Preface

In order to draw things in 2D, we usually rely on lines, which typically get classified into two categories: straight lines, and curves. The first of these are as easy to draw as they are easy to make a computer draw. Give a computer the first and last point in the line, and BAM! straight line. No questions asked.

Curves, however, are a much bigger problem. While we can draw curves with ridiculous ease freehand, computers are a bit handicapped in that they can't draw curves unless there is a mathematical function that describes how it should be drawn. In fact, they even need this for straight lines, but the function is ridiculously easy, so we tend to ignore that as far as computers are concerned; all lines are "functions", regardless of whether they're straight or curves. However, that does mean that we need to come up with fast-to-compute functions that lead to nice looking curves on a computer. There are a number of these, and in this article we'll focus on a particular function that has received quite a bit of attention and is used in pretty much anything that can draw curves: Bézier curves.

They're named after Pierre Bézier, who is principally responsible for making them known to the world as a curve well-suited for design work (publishing his investigations in 1962 while working for Renault), although he was not the first, or only one, to "invent" this type of curve. One might be tempted to say that the mathematician Paul de Casteljau was first, as he began investigating the nature of these curves in 1959 while working at Citroën, and came up with a really elegant way of figuring out how to draw them. However, de Casteljau did not publish his work, making the question "who was first" hard to answer in any absolute sense. Or is it? Bézier curves are, at their core, "Bernstein polynomials", a family of mathematical functions investigated by Sergei Natanovich Bernstein, whose publications on them date back at least as far as 1912.

Anyway, that's mostly trivia. What you are more likely to care about is that these curves are handy: you can link up multiple Bézier curves so that the combination looks like a single curve. If you've ever drawn Photoshop "paths" or worked with vector drawing programs like Flash, Illustrator or Inkscape, those curves you've been drawing are Bézier curves.

But what if you need to program them yourself? What are the pitfalls? How do you draw them? What are the bounding boxes, how do you determine intersections, how can you extrude a curve, in short: how do you do everything that you might want to do with these curves? That's what this page is for. Prepare to be mathed!

Virtually all Bézier graphics are interactive.

This page uses interactive examples, relying heavily on Bezier.js, as well as maths formulae which are typeset into SVG using the XeLaTeX typesetting system and pdf2svg by David Barton.

This book is open source.

This book is an open source software project, and lives in two GitHub repositories. The first is https://github.com/pomax/bezierinfo and is the purely-for-presentation version you are viewing right now. The other repository is https://github.com/pomax/BezierInfo-2, which is the development version, housing all the code that gets turned into the web version. It is also where you should file issues if you find bugs or have ideas on what to change or add to the primer.

How complicated is the maths going to be?

Most of the mathematics in this Primer is early high school maths. If you understand basic arithmetic, and you know how to read English, you should be able to get by just fine. There will at times be far more complicated maths, but if you don't feel like digesting it, you can safely skip it, either by skipping over the "detail boxes" in each section or by jumping to the end of any section whose maths looks too involved. The ends of sections typically list the conclusions, so you can work with those values directly.

What language is all this example code in?

There are way too many programming languages to favour one over all others, so all the example code in this Primer is written in a pseudo-code whose syntax is close to, but not actually, that of modern scripting languages like JS, Python, etc. That means you won't be able to copy-paste any of it without giving it some thought, but that's intentional: if you're reading this primer, presumably you want to learn, and you don't learn by copy-pasting. You learn by doing things yourself, making mistakes, and then fixing those mistakes. Now, of course, I didn't intentionally add errors to the example code just to trick you into making mistakes (that would be horrible!), but I did intentionally keep the code from favouring one programming language over another. Don't worry though: if you know even a single procedural programming language, you should be able to read the examples without any difficulty.

Questions, comments:

If you have suggestions for new sections, hit up the Github issue tracker (also reachable from the repo linked to in the upper right). If you have questions about the material, there's currently no comment section while I'm doing the rewrite, but you can use the issue tracker for that as well. Once the rewrite is done, I'll add a general comment section back in, and maybe a more topical "select this section of text and hit the 'question' button to ask a question about it" system. We'll see.

Help support the book!

If you enjoyed this book, or you simply found it useful for something you were trying to get done, and you were wondering how to let me know you appreciated this book, you have two options: you can either head on over to the Patreon page for this book, or if you prefer to make a one-time donation, head on over to the buy Pomax a coffee page. This work has grown from a small primer to a 100-plus print-page-equivalent reader on the subject of Bézier curves over the years, and a lot of coffee went into the making of it. I don't regret a minute I spent on writing it, but I can always do with some more coffee to keep on writing!

What's new?

This primer is a living document, so depending on when you last looked at it, there may be new content. The list below shows what got added, and when; the News posts have more detailed updates (RSS feed available).

November 2020

Added a section on finding curve/circle intersections

October 2020

Added the Ukrainian locale! Help out in getting its localization to 100%!

August-September 2020


Completely overhauled the site: the Primer is now a normal web page that works fine with JS disabled, but obviously better with JS turned on.

June 2020

Added automatic CI/CD using Github Actions

January 2020

Added reset buttons to all graphics

Updated the preface to correctly describe the on-page maths

Fixed the Catmull-Rom section because it had glaring maths errors

August 2019

Added a section on (plain) rational Bezier curves

Improved the Graphic component to allow for sliders

December 2018

Added a section on curvature and calculating kappa.


Added a Patreon page! Head on over to patreon.com/bezierinfo to help support this site!

August 2018

Added a section on finding a curve's y, if all you have is the x coordinate.

July 2018

Rewrote the 3D normals section, implementing and explaining Rotation Minimising Frames.

Updated the section on curve order raising/lowering, showing how to get a least-squares optimized lower order curve.


(Finally) updated 'npm test' so that it automatically rebuilds when files are changed while the dev server is running.

June 2018

Added a section on direct curve fitting.

Added source links for all graphics.

Added this "What's new?" section.

April 2017

Added a section on 3D normals.

Added live-updating for the social link buttons, so they always link to the specific section you're reading.

February 2017

Finished rewriting the entire codebase for localization.

January 2016

Added a section to explain the Bezier interval.

Rewrote the Primer as a React application.

December 2015

Set up the split repository between BezierInfo-2 as development repository, and bezierinfo as live page.


Removed the need for client-side LaTeX parsing entirely, so the site doesn't take a full minute or more to load all the graphics.

May 2015

Switched over to pure JS rather than Processing-through-Processing.js

Added Cardano's algorithm for finding the roots of a cubic polynomial.

April 2015

Added a section on arc length approximations.

February 2015

Added a section on the canonical cubic Bezier form.

November 2014

Switched to HTTPS.

July 2014

Added the section on arc approximation.

April 2014

Added the section on Catmull-Rom fitting.

November 2013

Added the section on Catmull-Rom / Bezier conversion.

Added the section on Bezier curves as matrices.

April 2013

Added a section on poly-Beziers.

Added a section on boolean shape operations.

March 2013

First drastic rewrite.

Added sections on circle approximations.

Added a section on projecting a point onto a curve.

Added a section on tangents and normals.

Added Legendre-Gauss numerical data tables.

October 2011


First commit for the bezierinfo site, based on the pre-Primer webpage that covered the basics of Bezier curves in HTML with Processing.js examples.

A lightning introduction

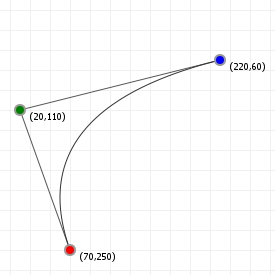

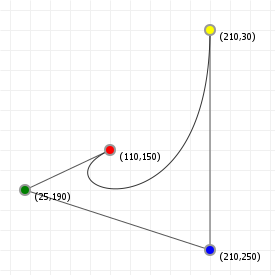

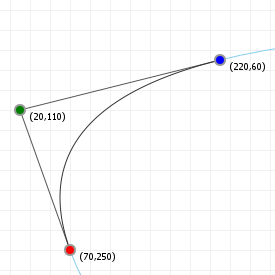

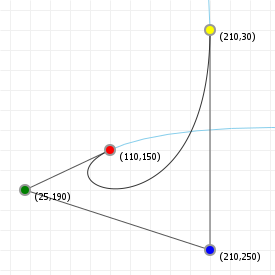

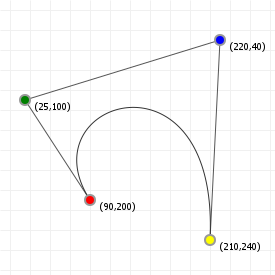

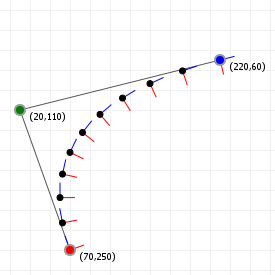

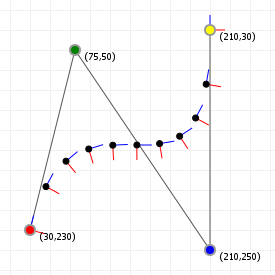

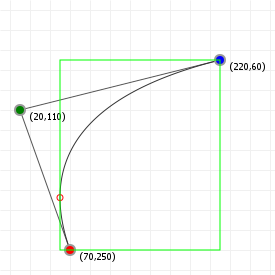

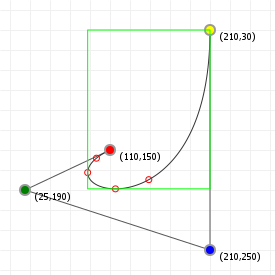

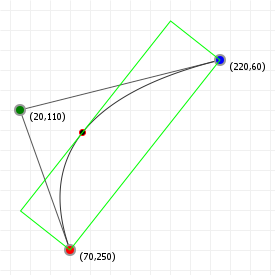

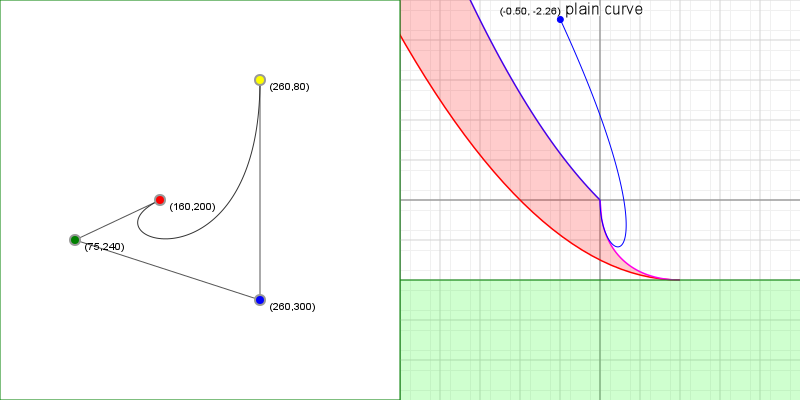

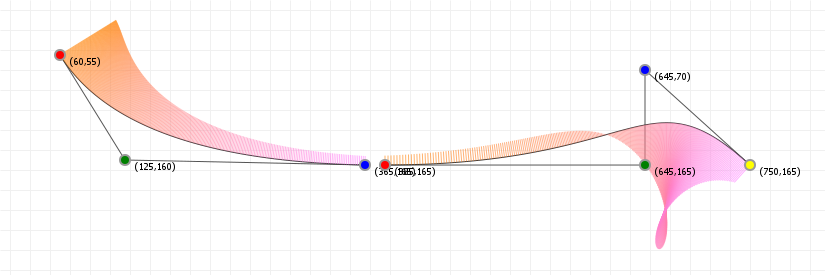

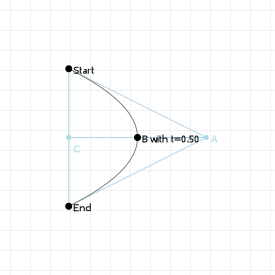

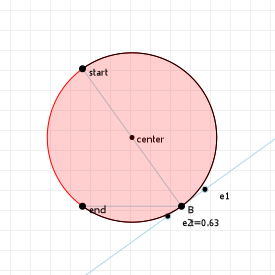

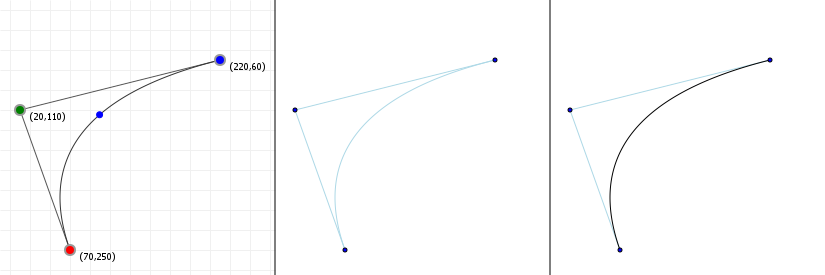

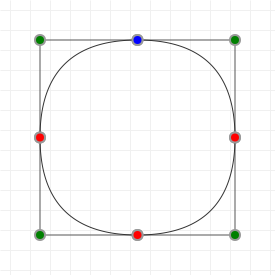

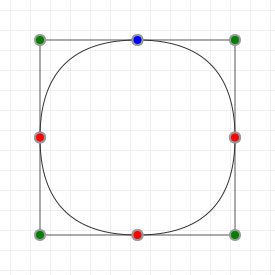

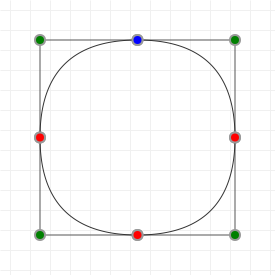

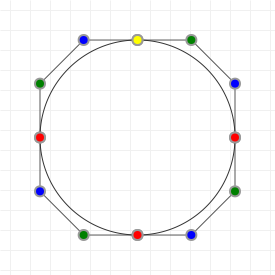

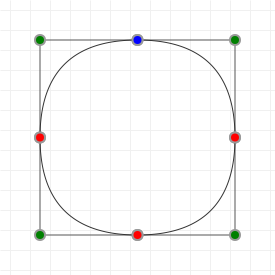

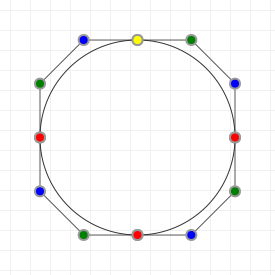

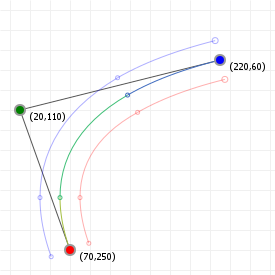

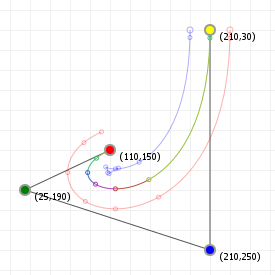

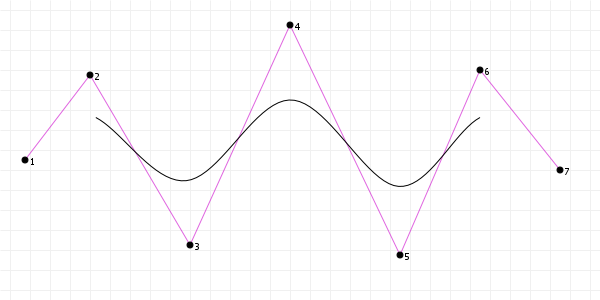

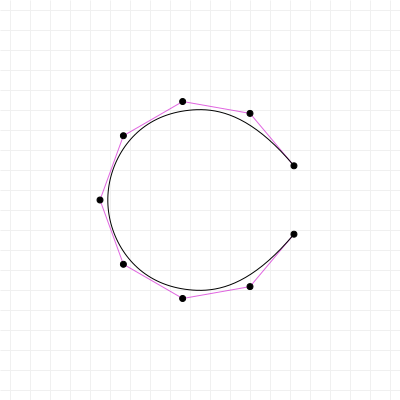

Let's start with the good stuff: when we're talking about Bézier curves, we're talking about the things that you can see in the following graphics. They run from some start point to some end point, with their curvature influenced by one or more "intermediate" control points. Now, because all the graphics on this page are interactive, go manipulate those curves a bit: click-drag the points, and see how their shape changes based on what you do.

These curves are used a lot in computer aided design and computer aided manufacturing (CAD/CAM) applications, as well as in graphic design programs like Adobe Illustrator and Photoshop, Inkscape, GIMP, etc. and in graphic technologies like scalable vector graphics (SVG) and OpenType fonts (TTF/OTF). A lot of things use Bézier curves, so if you want to learn more about them... prepare to get your learn on!

So what makes a Bézier Curve?

Playing with the points for curves may have given you a feel for how Bézier curves behave, but what are Bézier curves, really? There are two ways to explain what a Bézier curve is, and they turn out to be entirely equivalent, but one of them uses complicated maths, and the other uses really simple maths. So... let's start with the simple explanation:

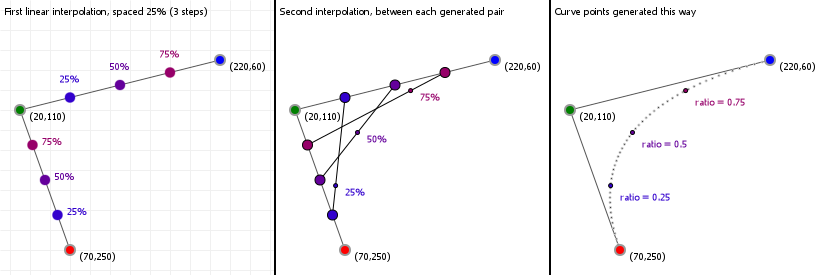

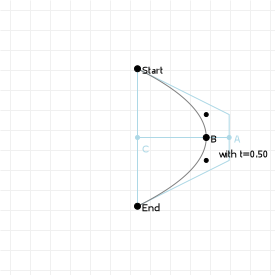

Bézier curves are the result of linear interpolations. That sounds complicated but you've been doing linear interpolation since you were very young: any time you had to point at something between two other things, you've been applying linear interpolation. It's simply "picking a point between two points".

If we know the distance between those two points, and we want a new point that is, say, 20% the distance away from the first point (and thus 80% the distance away from the second point) then we can compute that really easily:

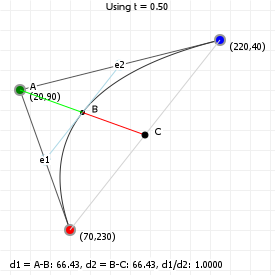

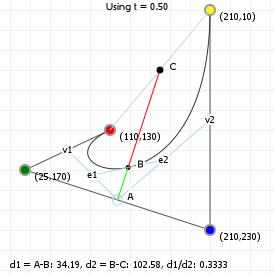
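A common way to write that "pick a point some ratio of the way along" computation is `start + ratio * (end - start)`. Here is a minimal Python sketch of it. The names `lerp` and `lerp_point` are our own and do not come from the Primer, and points are plain (x, y) tuples:

```python
def lerp(start: float, end: float, ratio: float) -> float:
    """Value `ratio` of the way from `start` to `end`.

    ratio = 0 gives `start`, ratio = 1 gives `end`, and 0.2 gives the value
    20% of the distance away from `start` (so 80% away from `end`).
    """
    return start + ratio * (end - start)


def lerp_point(p1, p2, ratio):
    """Interpolate two (x, y) points, one coordinate at a time."""
    return (lerp(p1[0], p2[0], ratio), lerp(p1[1], p2[1], ratio))


print(lerp_point((0, 0), (100, 50), 0.2))  # (20.0, 10.0)
```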

So let's look at that in action: the following graphic is interactive in that you can use your up and down arrow keys to increase or decrease the interpolation ratio, to see what happens. We start with three points, which gives us two lines. Linear interpolation over those lines gives us two points, between which we can again perform linear interpolation, yielding a single point. That point, together with all the points we can form this way for every ratio, forms our Bézier curve:
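The same "interpolate, then interpolate the results" idea fits in a few lines of Python. This is a sketch under our own naming (`lerp_point`, `quadratic_point` and `sample_quadratic` are illustrative) that computes quadratic curve points from three (x, y) tuples using nothing but linear interpolation:

```python
def lerp_point(p1, p2, ratio):
    """Point that lies `ratio` of the way from p1 to p2."""
    return (p1[0] + ratio * (p2[0] - p1[0]),
            p1[1] + ratio * (p2[1] - p1[1]))


def quadratic_point(p0, p1, p2, t):
    """Quadratic Bezier point at t, as an interpolation of interpolations."""
    a = lerp_point(p0, p1, t)   # marker on the first line
    b = lerp_point(p1, p2, t)   # marker on the second line
    return lerp_point(a, b, t)  # marker on the line between those two markers


def sample_quadratic(p0, p1, p2, steps=10):
    """Curve points for t = 0, 1/steps, 2/steps, ..., 1."""
    return [quadratic_point(p0, p1, p2, i / steps) for i in range(steps + 1)]


curve = sample_quadratic((0, 0), (50, 100), (100, 0), steps=4)
print(curve[2])  # t = 0.5 gives (50.0, 50.0)
```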

And that brings us to the complicated maths: calculus.

While it doesn't look like that's what we've just done, we actually just drew a quadratic curve, in steps, rather than in a single go. One of the fascinating parts about Bézier curves is that they can be described both in terms of polynomial functions and in terms of very simple interpolations of interpolations of [...]. That, in turn, means we can look at what these curves can do based on both "real maths" (by examining the functions, their derivatives, and all that stuff) and the "mechanical" composition (which tells us, for instance, that a curve will never extend beyond the points we used to construct it).

So let's start looking at Bézier curves a bit more in depth: their mathematical expressions, the properties we can derive from them, and the various things we can do to, and with, Bézier curves.

The mathematics of Bézier curves

Bézier curves are a form of "parametric" function. Mathematically speaking, parametric functions are cheats: a "function" is actually a well defined term representing a mapping from any number of inputs to a single output. Numbers go in, a single number comes out. Change the numbers that go in, and the number that comes out is still a single number.

Parametric functions cheat. They basically say "alright, well, we want multiple values coming out, so we'll just use more than one function". An illustration: Let's say we have a function that maps some value, let's call it x, to some other value, using some kind of number manipulation:

The notation f(x) is the standard way to show that it's a function (by convention called f if we're only listing one) and its output changes based on one variable (in this case, x). Change x, and the output for f(x) changes.

So far, so good. Now, let's look at parametric functions, and how they cheat. Let's take the following two functions:

$$
\begin{aligned}
f_a(a) &= \sin(a) \\
f_b(b) &= \cos(b)
\end{aligned}
$$

There's nothing really remarkable about them, they're just a sine and cosine function, but you'll notice the inputs have different names. If we change the value for a, we're not going to change the output value for $f_b(b)$, since a isn't used in that function. Parametric functions cheat by changing that. In a parametric function all the different functions share a variable, like this:

$$
\begin{aligned}
f_a(t) &= \sin(t) \\
f_b(t) &= \cos(t)
\end{aligned}
$$

Multiple functions, but only one variable. If we change the value for t, we change the outcome of both $f_a(t)$ and $f_b(t)$. You might wonder how that's useful, and the answer is actually pretty simple: if we replace the labels $f_a(t)$ and $f_b(t)$ with what we usually mean by them for parametric curves, things might be a lot more obvious:

$$
\begin{aligned}
x &= \sin(t) \\
y &= \cos(t)
\end{aligned}
$$

There we go. x/y coordinates, linked through some mystery value t.
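To make the "one t in, two values out" idea concrete, here is a small Python sketch. It assumes, as the surrounding text describes, that the two functions are a sine and a cosine of the same t (x = sin(t), y = cos(t)); the name `circle_point` is our own. It samples t from 0 to 5 and measures how far each sample lies from the origin:

```python
import math


def circle_point(t: float) -> tuple[float, float]:
    """One input value t, two output values: an (x, y) coordinate."""
    return (math.sin(t), math.cos(t))


# Sample t from 0 to 5 in steps of 0.1.
samples = [circle_point(i / 10) for i in range(51)]

# Distance of every sample from the origin (0, 0).
radii = [math.hypot(x, y) for x, y in samples]
print(max(abs(r - 1.0) for r in radii))  # tiny: floating point noise only
```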

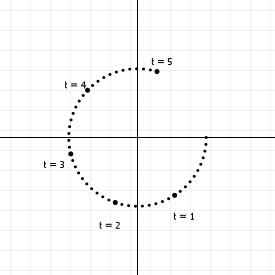

So, parametric curves don't define a y coordinate in terms of an x coordinate, like normal functions do, but they instead link the values to a "control" variable. If we vary the value of t, then with every change we get two values, which we can use as (x,y) coordinates in a graph. The above set of functions, for instance, generates points on a circle: We can range t from negative to positive infinity, and the resulting (x,y) coordinates will always lie on a circle with radius 1 around the origin (0,0). If we plot it for t from 0 to 5, we get this:

Bézier curves are just one out of the many classes of parametric functions, and are characterised by using the same base function for all of the output values. In the example we saw above, the x and y values were generated by different functions (one uses a sine, the other a cosine); but Bézier curves use the "binomial polynomial" for both the x and y outputs. So what are binomial polynomials?

You may remember polynomials from high school. They're those sums that look like this:

$$
f(x) = a \cdot x^3 + b \cdot x^2 + c \cdot x + d
$$

If the highest order term they have is x³, they're called "cubic" polynomials; if it's x², it's a "square" polynomial; if it's just x, it's a line (and if there aren't any terms with x at all, it's just a constant, the most boring polynomial there is!)

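Bézier curves are polynomials of t, rather than x, with the value for t being fixed between 0 and 1, and with coefficients a, b etc. taking the "binomial" form, which sounds fancy but is actually a pretty simple description for mixing values:

$$
\begin{aligned}
\text{linear} &= (1-t) + t \\
\text{square} &= (1-t)^2 + 2 \cdot (1-t) \cdot t + t^2 \\
\text{cubic} &= (1-t)^3 + 3 \cdot (1-t)^2 \cdot t + 3 \cdot (1-t) \cdot t^2 + t^3
\end{aligned}
$$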

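I know what you're thinking: that doesn't look too simple! But if we remove t and add in "times one", things suddenly look pretty easy. Check out these binomial terms:

$$
\begin{aligned}
\text{linear} &= 1 + 1 \\
\text{square} &= 1 + 2 + 1 \\
\text{cubic} &= 1 + 3 + 3 + 1 \\
\text{4th order} &= 1 + 4 + 6 + 4 + 1
\end{aligned}
$$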

Notice that 2 is the same as 1+1, and 3 is 2+1 and 1+2, and 6 is 3+3... As you can see, each time we go up a dimension, we simply start and end with 1, and everything in between is just "the two numbers above it, added together", giving us a simple number sequence known as Pascal's triangle. Now that's easy to remember.

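There's an equally simple way to figure out how the polynomial terms work: if we rename (1-t) to a and t to b, and remove the weights for a moment, we get this:

$$
\begin{aligned}
\text{linear} &= a + b \\
\text{square} &= a \cdot a + a \cdot b + b \cdot b \\
\text{cubic} &= a \cdot a \cdot a + a \cdot a \cdot b + a \cdot b \cdot b + b \cdot b \cdot b
\end{aligned}
$$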

It's basically just a sum of "every combination of a and b", progressively replacing a's with b's after every + sign. So that's actually pretty simple too. So now you know binomial polynomials, and just for completeness I'm going to show you the generic function for this:

$$
\text{Bézier}(n,t) = \sum_{i=0}^{n} \binom{n}{i} \cdot (1-t)^{n-i} \cdot t^{i}
$$

And that's the full description for Bézier curves. Σ in this function indicates that this is a series of additions (using the variable listed below the Σ, starting at ...=<value> and ending at the value listed on top of the Σ).

How to implement the basis function

We could naively implement the basis function as a mathematical construct, using the function as our guide, like this:
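Here is a minimal Python sketch of that naive version. It assumes "the basis function" means the plain binomial sum, without control points yet, and the name `bezier_basis_naive` is illustrative. The terms always add up to exactly 1 (the binomial theorem applied to ((1-t) + t)^n), which makes for an easy sanity check:

```python
from math import factorial


def bezier_basis_naive(n: int, t: float) -> float:
    """Sum of the binomial terms for an nth order curve, using factorials."""
    total = 0.0
    for k in range(n + 1):  # k runs from 0 up to and including n
        binomial = factorial(n) // (factorial(k) * factorial(n - k))
        total += binomial * (1 - t) ** (n - k) * t ** k
    return total


print(bezier_basis_naive(3, 0.25))  # 1.0
```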


I say we could, because we're not going to: the factorial function is incredibly expensive. And, as we can see from the above explanation, we can actually create Pascal's triangle quite easily without it: just start at [1], then [1,1], then [1,2,1], then [1,3,3,1], and so on, with each next row fitting 1 more number than the previous row, starting and ending with "1", with all the numbers in between being the sum of the previous row's elements on either side "above" the one we're computing.

We can generate this as a list of lists lightning fast, and then never have to compute the binomial terms because we have a lookup table:
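A hedged Python sketch of that idea follows. It uses a module-level list `lut` that holds Pascal's triangle row by row, pre-filled with a handful of rows (how many is an arbitrary choice here), and a `binomial(n, k)` helper that appends new rows on demand. Both names are illustrative:

```python
# Pascal's triangle, one list per row, pre-filled with the first few rows.
lut = [[1], [1, 1], [1, 2, 1], [1, 3, 3, 1], [1, 4, 6, 4, 1]]


def binomial(n: int, k: int) -> int:
    """Look up n-choose-k, growing the triangle first if row n is missing."""
    while n >= len(lut):
        prev = lut[-1]
        # Each new row starts and ends with 1; every value in between is
        # the sum of the two values "above" it in the previous row.
        row = [1] + [prev[i - 1] + prev[i] for i in range(1, len(prev))] + [1]
        lut.append(row)
    return lut[n][k]


print(binomial(6, 3))  # 20
```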


So what's going on here? First, we declare a lookup table with a size that's large enough to accommodate most lookups. Then, we declare a function to get us the values we need, and we make sure that if an n/k pair is requested that isn't in the LUT yet, we expand it first. Our basis function now looks like this:


Perfect. Of course, we can optimize further. For most computer graphics purposes, we don't need arbitrary curves (although we will also provide code for arbitrary curves in this primer); we need quadratic and cubic curves, and that means we can drastically simplify the code:
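In Python, a hedged sketch of those two special cases could look like the following. The binomial weights (1, 2, 1) and (1, 3, 3, 1) are written out, and the powers of t and (1-t) are computed once and reused. `bezier2` and `bezier3` are illustrative names:

```python
def bezier2(t: float) -> float:
    """Quadratic basis sum, binomials 1, 2, 1 written out."""
    t2 = t * t
    mt = 1 - t
    mt2 = mt * mt
    return mt2 + 2 * mt * t + t2


def bezier3(t: float) -> float:
    """Cubic basis sum, binomials 1, 3, 3, 1 written out."""
    t2 = t * t
    t3 = t2 * t
    mt = 1 - t
    mt2 = mt * mt
    mt3 = mt2 * mt
    return mt3 + 3 * mt2 * t + 3 * mt * t2 + t3
```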


And now we know how to program the basis function. Excellent.

So, now we know what the basis function looks like, time to add in the magic that makes Bézier curves so special: control points.

Controlling Bézier curvatures

Bézier curves are, like all "splines", interpolation functions. This means that they take a set of points, and generate values somewhere "between" those points. (One of the consequences of this is that you'll never be able to generate a point that lies outside the outline for the control points, commonly called the "hull" for the curve. Useful information!). In fact, we can visualize how each point contributes to the value generated by the function, so we can see which points are important, where, in the curve.

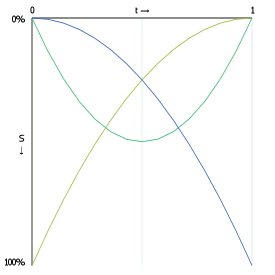

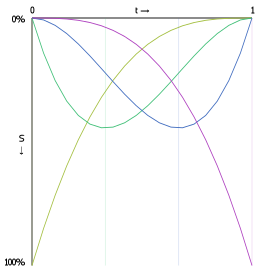

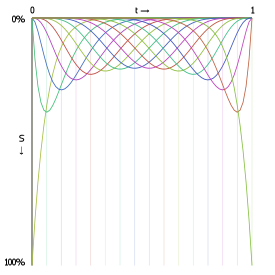

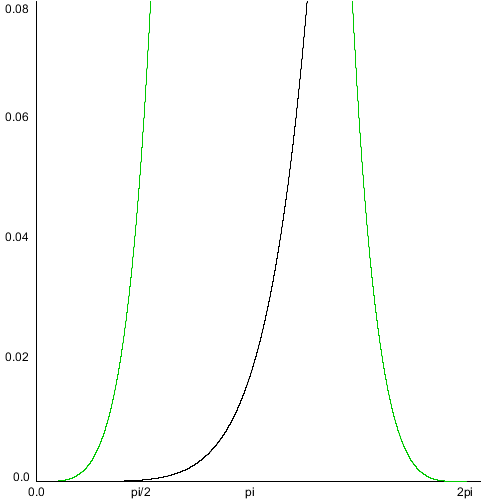

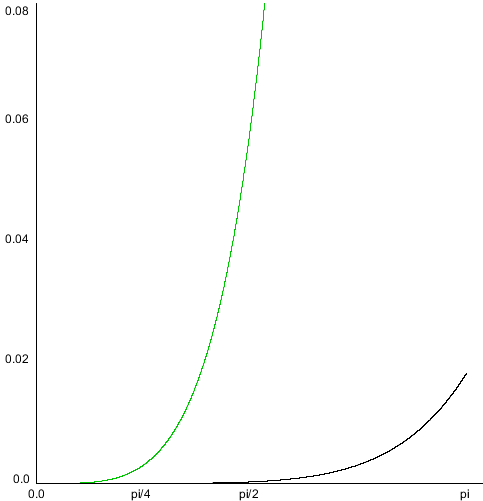

The following graphs show the interpolation functions for quadratic and cubic curves, with "S" being the strength of a point's contribution to the total sum of the Bézier function. Click-and-drag to see the interpolation percentages for each curve-defining point at a specific t value.

Also shown is the interpolation function for a 15th order Bézier function. As you can see, the start and end points contribute considerably more to the curve's shape than any other point in the control point set.

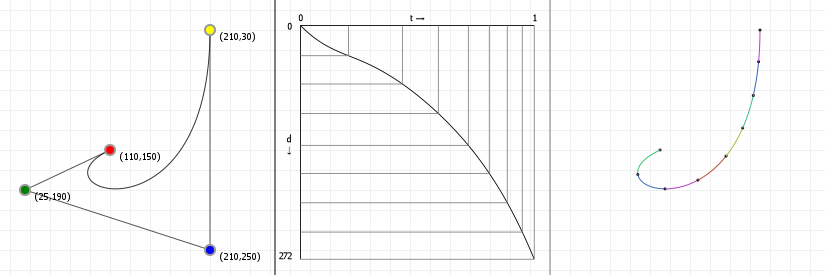

If we want to change the curve, we need to change the weights of each point, effectively changing the interpolations. The way to do this is about as straightforward as possible: just multiply each point with a value that changes its strength. These values are conventionally called "weights", and we can add them to our original Bézier function:

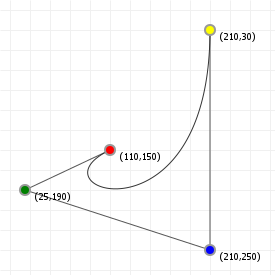

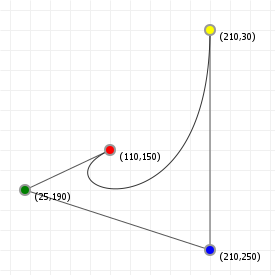

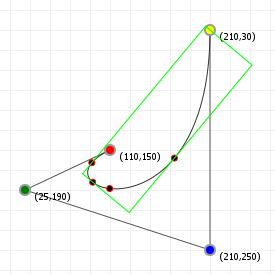

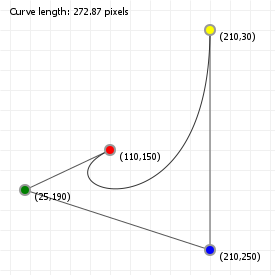

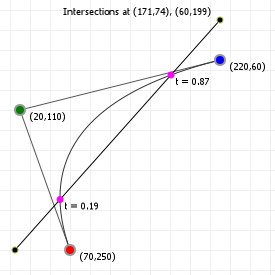

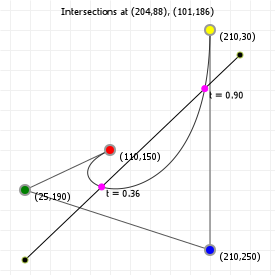

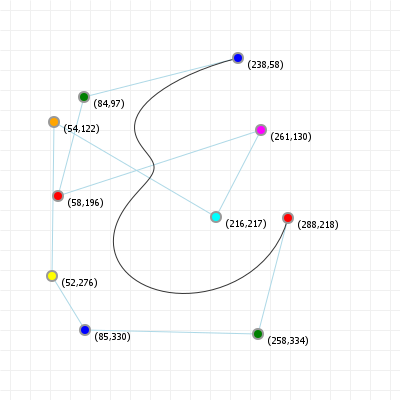

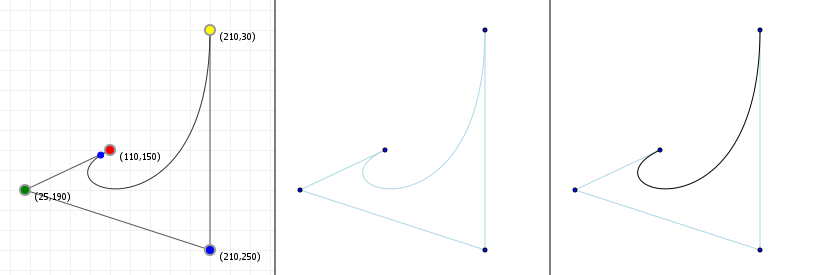

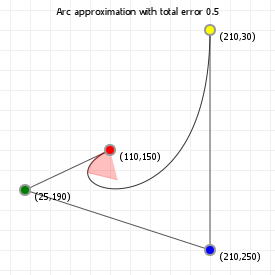

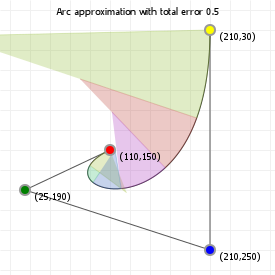

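That looks complicated, but as it so happens, the "weights" are actually just the coordinate values we want our curve to have: for an nth order curve, $w_0$ is our start coordinate, $w_n$ is our last coordinate, and everything in between is a controlling coordinate. Say we want a cubic curve that starts at (110,150), is controlled by (25,190) and (210,250) and ends at (210,30); we use this Bézier curve:

$$
\begin{aligned}
x(t) &= 110 \cdot (1-t)^3 + 25 \cdot 3 \cdot (1-t)^2 \cdot t + 210 \cdot 3 \cdot (1-t) \cdot t^2 + 210 \cdot t^3 \\
y(t) &= 150 \cdot (1-t)^3 + 190 \cdot 3 \cdot (1-t)^2 \cdot t + 250 \cdot 3 \cdot (1-t) \cdot t^2 + 30 \cdot t^3
\end{aligned}
$$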

Which gives us the curve we saw at the top of the article:

What else can we do with Bézier curves? Quite a lot, actually. The rest of this article covers a multitude of possible operations and algorithms that we can apply, and the tasks they achieve.

How to implement the weighted basis function

Given that we already know how to implement the basis function, adding in the control points is remarkably easy:
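Here is a minimal Python sketch. It assumes the weights for one axis are passed as a list, so a 2D curve is evaluated once for its x values and once for its y values. For brevity it uses the standard library's `math.comb` (Python 3.8+) for the binomial coefficients rather than a lookup table. `bezier` and `bezier_point` are illustrative names. The usage lines evaluate the cubic example curve from earlier, with start (110,150), controls (25,190) and (210,250), and end (210,30):

```python
from math import comb


def bezier(weights: list[float], t: float) -> float:
    """Weighted Bezier sum for one axis: each binomial term times its weight.

    For an nth order curve, `weights` holds n + 1 values: the start
    coordinate, the control coordinates, then the end coordinate.
    """
    n = len(weights) - 1
    return sum(w * comb(n, k) * (1 - t) ** (n - k) * t ** k
               for k, w in enumerate(weights))


def bezier_point(points, t):
    """Evaluate x and y separately, each with its own list of weights."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return (bezier(xs, t), bezier(ys, t))


curve = [(110, 150), (25, 190), (210, 250), (210, 30)]
print(bezier_point(curve, 0.5))  # (128.125, 187.5)
```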


And now for the optimized versions:
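Here is the same quadratic/cubic special-casing as a Python sketch, now with weights. Each function takes the weights for one axis as a list, and `bezier2` and `bezier3` are illustrative names:

```python
def bezier2(w, t):
    """Quadratic: w holds three weights (start, control, end)."""
    t2 = t * t
    mt = 1 - t
    mt2 = mt * mt
    return w[0] * mt2 + w[1] * 2 * mt * t + w[2] * t2


def bezier3(w, t):
    """Cubic: w holds four weights (start, two controls, end)."""
    t2 = t * t
    t3 = t2 * t
    mt = 1 - t
    mt2 = mt * mt
    mt3 = mt2 * mt
    return w[0] * mt3 + 3 * w[1] * mt2 * t + 3 * w[2] * mt * t2 + w[3] * t3


# x coordinate of the cubic example curve at t = 0.5
print(bezier3([110, 25, 210, 210], 0.5))  # 128.125
```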


And now we know how to program the weighted basis function.

Controlling Bézier curvatures, part 2: Rational Béziers

We can further control Bézier curves by "rationalising" them: that is, adding a "ratio" value in addition to the weight value discussed in the previous section, thereby gaining control over "how strongly" each coordinate influences the curve.

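Adding these ratio values to the regular Bézier curve function is fairly easy. Where the regular function is the following:

$$
\text{Bézier}(n,t) = \sum_{i=0}^{n} \binom{n}{i} \cdot (1-t)^{n-i} \cdot t^{i} \cdot w_i
$$

The function for rational Bézier curves has two more terms:

$$
\text{Bézier}(n,t) = \frac{\sum_{i=0}^{n} \binom{n}{i} \cdot (1-t)^{n-i} \cdot t^{i} \cdot w_i \cdot \text{ratio}_i}{\sum_{i=0}^{n} \binom{n}{i} \cdot (1-t)^{n-i} \cdot t^{i} \cdot \text{ratio}_i}
$$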

In this, the first new term represents an additional weight for each coordinate. For example, if our ratio values are [1, 0.5, 0.5, 1], then $\text{ratio}_0 = 1$, $\text{ratio}_1 = 0.5$, and so on. On its own, that is effectively identical to just using different weights. So far, nothing too special.

However, the second new term is what makes the difference: every point on the curve isn't just a "double weighted" point, it is a fraction of the "doubly weighted" value we compute by introducing that ratio. When computing points on the curve, we compute the "normal" Bézier value and then divide that by the Bézier value for the curve that only uses ratios, not weights.

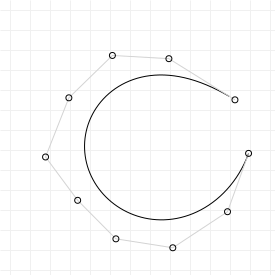

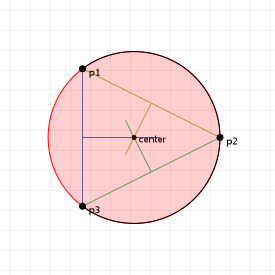

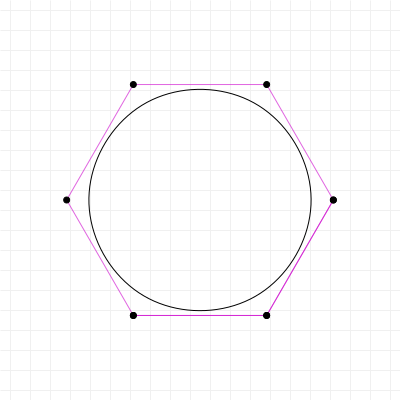

This does something unexpected: it turns our polynomial into something that isn't a polynomial anymore. It is now a kind of curve that is a super class of the polynomials, and can do some really cool things that Bézier curves can't do "on their own", such as perfectly describing circles (which we'll see in a later section is literally impossible using standard Bézier curves).

But the best way to show what this does is to do literally that: let's look at the effect of "rationalising" our Bézier curves using an interactive graphic for a rationalised curve. The following graphic shows the Bézier curve from the previous section, "enriched" with ratio factors for each coordinate. The closer to zero we set one or more terms, the less relative influence the associated coordinate exerts on the curve (and of course the higher we set them, the more influence they have). Try to change the values and see how it affects what gets drawn:

You can think of the ratio values as each coordinate's "gravity": the higher the gravity, the closer to that coordinate the curve will want to be. You'll also notice that if you simply increase or decrease all the ratios by the same amount, nothing changes... much like with gravity, if the relative strengths stay the same, nothing really changes. The values define each coordinate's influence relative to all other points.

How to implement rational curves

Extending the code of the previous section to include ratios is almost trivial:
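Here is a minimal Python sketch of the rational version. It assumes the weights and ratios for one axis are passed as two lists of equal length. It computes the regular weighted sum with each term also multiplied by its ratio, then divides by the ratio-only sum. It uses `math.comb` for the binomials, and `rational_bezier` and `rational_point` are illustrative names. The usage lines use a well-known rational quadratic (control points (1,0), (1,1), (0,1) with ratios 1, √2/2, 1) that traces an exact quarter of the unit circle:

```python
import math
from math import comb


def rational_bezier(weights, ratios, t):
    """Rational Bezier value for one axis at parameter t."""
    n = len(weights) - 1
    numerator = 0.0
    denominator = 0.0
    for k in range(n + 1):
        basis = comb(n, k) * (1 - t) ** (n - k) * t ** k
        numerator += basis * weights[k] * ratios[k]  # weight and ratio
        denominator += basis * ratios[k]             # ratios only
    return numerator / denominator


def rational_point(points, ratios, t):
    """Evaluate x and y separately, sharing one list of ratios."""
    xs = [p[0] for p in points]
    ys = [p[1] for p in points]
    return (rational_bezier(xs, ratios, t), rational_bezier(ys, ratios, t))


# A quarter of the unit circle: exact for this rational quadratic.
arc = [(1, 0), (1, 1), (0, 1)]
arc_ratios = [1, math.sqrt(2) / 2, 1]
x, y = rational_point(arc, arc_ratios, 0.3)
print(math.hypot(x, y))  # 1.0, up to floating point rounding
```

Scaling every ratio by the same factor cancels out between numerator and denominator, which matches the "relative gravity" behaviour described above.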


And that's all we have to do.

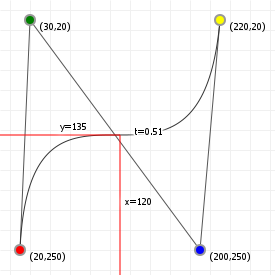

The Bézier interval [0,1]

Now that we know the mathematics behind Bézier curves, there's one curious thing that you may have noticed: they always run from t=0 to t=1. Why that particular interval?

It all has to do with how we run from "the start" of our curve to "the end" of our curve. If we have a value that is a mixture of two other values, then the general formula for this is:

$$
\text{mix} = a \cdot \text{value}_1 + b \cdot \text{value}_2
$$

The obvious start and end values here need to be a=1, b=0, so that the mixed value is 100% value 1, and 0% value 2, and a=0, b=1, so that the mixed value is 0% value 1 and 100% value 2. Additionally, we don't want "a" and "b" to be independent: if they are, then we could just pick whatever values we like, and end up with a mixed value that is, for example, 100% value 1 and 100% value 2. In principle that's fine, but for Bézier curves we always want mixed values between the start and end point, so we need to make sure we can never set "a" and "b" to values that lead to a mix that sums to more than 100%.

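And that's easy:

$$
\begin{aligned}
a + b &= 1 \\
\text{mix} &= a \cdot \text{value}_1 + (1 - a) \cdot \text{value}_2
\end{aligned}
$$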

With this we can guarantee that we never sum above 100%. By restricting a to values in the interval [0,1], we will always be somewhere between our two values (inclusively), and we will always sum to a 100% mix.

But... what if we use this form, which is based on the assumption that we will only ever use values between 0 and 1, and instead use values outside of that interval? Do things go horribly wrong? Well... not really, but we get to "see more".

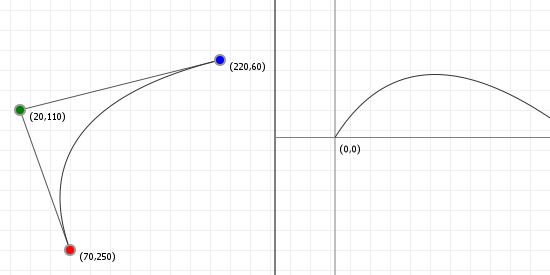

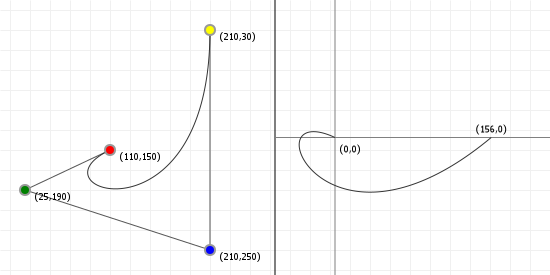

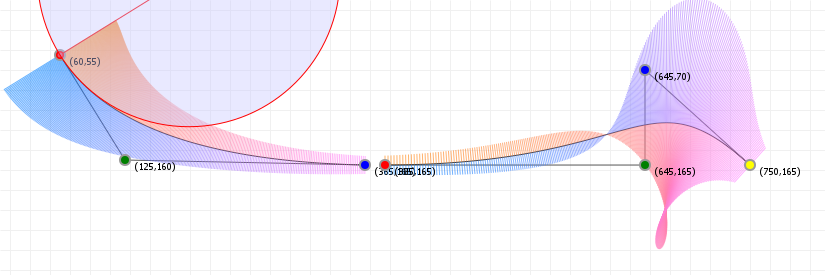

In the case of Bézier curves, extending the interval simply makes our curve "keep going". Bézier curves are simply segments of some polynomial curve, so if we pick a wider interval we simply get to see more of the curve. So what do they look like?

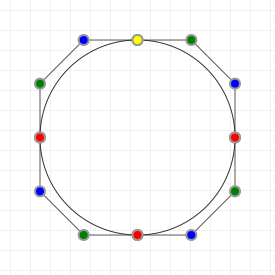

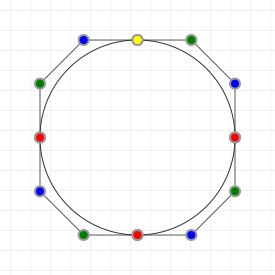

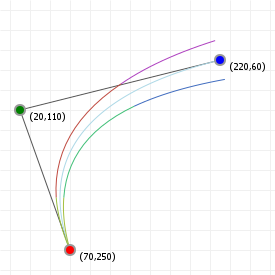

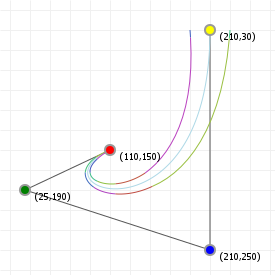

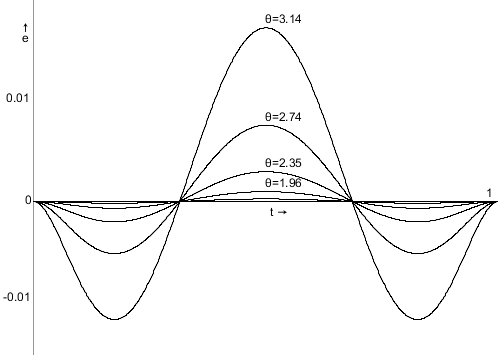

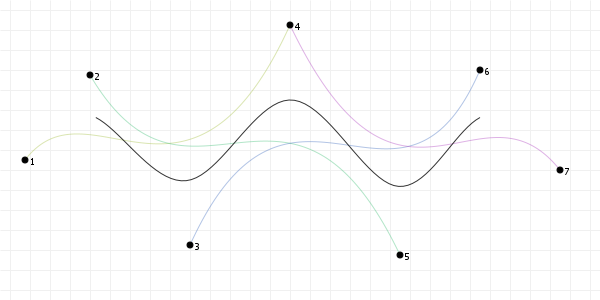

The following two graphics show you Bézier curves rendered "the usual way", as well as the curves they "lie on" if we were to extend the t values much further. As you can see, there's a lot more "shape" hidden in the rest of the curve, and we can model those parts by moving the curve points around.
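Nothing in the Bézier formula itself stops us from plugging in t values outside [0,1]. The following small Python sketch uses the plain weighted formula (via `math.comb`) and illustrative names. It samples a quadratic curve over t from -1 to 2 and shows that, past t=1, the curve leaves the area spanned by its control points:

```python
from math import comb


def bezier_point(points, t):
    """Weighted Bezier point at t; nothing here requires 0 <= t <= 1."""
    n = len(points) - 1
    x = y = 0.0
    for k, (px, py) in enumerate(points):
        b = comb(n, k) * (1 - t) ** (n - k) * t ** k
        x += b * px
        y += b * py
    return (x, y)


quad = [(0, 0), (50, 100), (100, 0)]
regular = [bezier_point(quad, i / 10) for i in range(11)]        # t in [0, 1]
extended = [bezier_point(quad, -1 + i / 10) for i in range(31)]  # t in [-1, 2]
print(bezier_point(quad, 1.5))  # (150.0, -150.0): below every control point
```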

In fact, there are curves used in graphics design and computer modelling that do the opposite of Bézier curves; rather than fixing the interval, and giving you freedom to choose the coordinates, they fix the coordinates, but give you freedom over the interval. A great example of this is the "Spiro" curve, which is a curve based on part of a Cornu Spiral, also known as Euler's Spiral. It's a very aesthetically pleasing curve and you'll find it in quite a few graphics packages like FontForge and Inkscape. It has even been used in font design, for example for the Inconsolata typeface.

Bézier curvatures as matrix operations

We can also represent Bézier curves as matrix operations, by expressing the Bézier formula as a polynomial basis function and a coefficients matrix, and the actual coordinates as a matrix. Let's look at what this means for the cubic curve, using P... to refer to coordinate values "in one or more dimensions":

Disregarding our actual coordinates for a moment, we have:

We can write this as a sum of four expressions:

And we can expand these expressions:

Furthermore, we can make all the 1 and 0 factors explicit:

And that, we can view as a series of four matrix operations:

If we compact this into a single matrix operation, we get:

This kind of polynomial basis representation is generally written with the bases in increasing order, which means we need to flip our t matrix horizontally, and our big "mixing" matrix upside down:

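And then finally, we can add in our original coordinates as a single third matrix:

$$
B(t) =
\begin{bmatrix} 1 & t & t^2 & t^3 \end{bmatrix}
\cdot
\begin{bmatrix}
1 & 0 & 0 & 0 \\
-3 & 3 & 0 & 0 \\
3 & -6 & 3 & 0 \\
-1 & 3 & -3 & 1
\end{bmatrix}
\cdot
\begin{bmatrix} P_1 \\ P_2 \\ P_3 \\ P_4 \end{bmatrix}
$$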

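We can perform the same trick for the quadratic curve, in which case we end up with:

$$
B(t) =
\begin{bmatrix} 1 & t & t^2 \end{bmatrix}
\cdot
\begin{bmatrix}
1 & 0 & 0 \\
-2 & 2 & 0 \\
1 & -2 & 1
\end{bmatrix}
\cdot
\begin{bmatrix} P_1 \\ P_2 \\ P_3 \end{bmatrix}
$$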

If we plug in a t value, and then multiply the matrices, we will get exactly the same values as when we evaluate the original polynomial function, or as when we evaluate the curve using progressive linear interpolation.
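Here is a small Python sketch of the cubic matrix form. It assumes one axis of coordinates at a time and uses plain lists rather than a matrix library. It multiplies the row [1, t, t², t³] by the mixing matrix and then by the coordinate column, and checks the result against the expanded polynomial. `CUBIC_MATRIX`, `cubic_matrix_point` and `cubic_polynomial_point` are illustrative names:

```python
CUBIC_MATRIX = [
    [1, 0, 0, 0],
    [-3, 3, 0, 0],
    [3, -6, 3, 0],
    [-1, 3, -3, 1],
]


def cubic_matrix_point(coords, t):
    """One axis of a cubic curve as [1 t t^2 t^3] . M . [P1 P2 P3 P4]^T."""
    powers = [1, t, t ** 2, t ** 3]
    # Row vector times matrix: the four blend factors for this t.
    blend = [sum(powers[i] * CUBIC_MATRIX[i][j] for i in range(4))
             for j in range(4)]
    # Blend factors times the coordinate column.
    return sum(b * c for b, c in zip(blend, coords))


def cubic_polynomial_point(coords, t):
    """The same value, straight from the expanded binomial form."""
    mt = 1 - t
    p1, p2, p3, p4 = coords
    return (mt ** 3 * p1 + 3 * mt ** 2 * t * p2
            + 3 * mt * t ** 2 * p3 + t ** 3 * p4)


xs = [110, 25, 210, 210]  # x coordinates of the cubic example curve
for step in range(11):
    t = step / 10
    assert abs(cubic_matrix_point(xs, t) - cubic_polynomial_point(xs, t)) < 1e-9
```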

So: why would we bother with matrices? Matrix representations allow us to discover things about functions that would otherwise be hard to tell. It turns out that the mixing matrices for these curves are triangular, so their determinant is simply the product of their diagonal entries. Since those entries are all non-zero, the matrices are also invertible, which means there's a ton of properties that are all satisfied. Of course, the main question is "why is this useful to us now?", and the answer to that is that it's not immediately useful, but you'll be seeing some instances where certain curve properties can be either computed via function manipulation, or via clever use of matrices, and sometimes the matrix approach can be (drastically) faster.

So for now, just remember that we can represent curves this way, and let's move on.

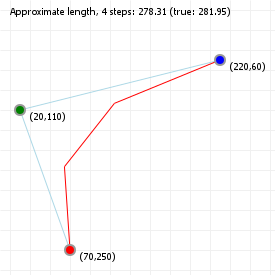

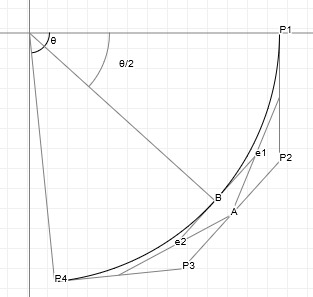

de Casteljau's algorithm

If we want to draw Bézier curves, we can run through all values of t from 0 to 1 and then compute the weighted basis function at each value, getting the x/y values we need to plot. Unfortunately, the more complex the curve gets, the more expensive this computation becomes. Instead, we can use de Casteljau's algorithm to draw curves. This is a geometric approach to curve drawing, and it's really easy to implement. So easy, in fact, you can do it by hand with a pencil and ruler.

Rather than using our calculus function to find x/y values for t, let's do this instead:

- Treat t as a ratio (which it is). t=0 is 0% along a line, t=1 is 100% along a line.
- Take all lines between the curve's defining points. For an order n curve, that's n lines.
- Place markers along each of these lines, at distance t. So if t is 0.2, place the mark at 20% from the start, 80% from the end.
- Now form lines between those points. This gives n-1 lines.
- Place markers along each of these lines at distance t.
- Form lines between those points. This'll be n-2 lines.
- Place markers, form lines, place markers, etc.
- Repeat this until you have only one line left. The point t on that line coincides with the original curve point at t.

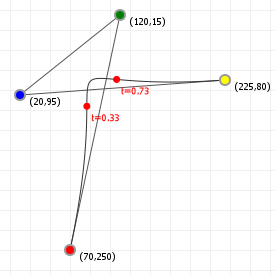

To see this in action, move the slider for the following sketch to change which curve point is explicitly evaluated using de Casteljau's algorithm.

How to implement de Casteljau's algorithm

Let's just use the algorithm we just specified, and implement it as a function that takes a list of curve-defining points and a t value, and draws the associated point on the curve for that t value:
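A minimal Python sketch of that recursive function, assuming a small hypothetical `Point` class that overloads `+` and scalar `*`. Rather than drawing, it returns the final point, so any drawing call can be plugged in afterwards.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    x: float
    y: float

    def __add__(self, other):
        return Point(self.x + other.x, self.y + other.y)

    def __rmul__(self, s):
        # Supports "number * point".
        return Point(s * self.x, s * self.y)


def curve_point(points, t):
    """The on-curve point for t, found with de Casteljau's algorithm."""
    if len(points) == 1:
        return points[0]
    # Place a marker at ratio t along each line between consecutive points.
    new_points = [(1 - t) * points[i] + t * points[i + 1]
                  for i in range(len(points) - 1)]
    return curve_point(new_points, t)
```

For example, `curve_point([Point(0, 0), Point(1, 2), Point(2, 0)], 0.5)` walks through two levels of markers and ends at the curve's midpoint.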


And done, that's the algorithm implemented. Although: usually you don't get the luxury of overloading the "+" operator, so let's also give the code for when you need to work with x and y values separately:
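A sketch of the same recursion with plain numbers, assuming the x and y coordinates are kept in two separate lists of equal length (`curve_point_xy` is an illustrative name):

```python
def curve_point_xy(xs, ys, t):
    """de Casteljau's algorithm with separate x and y coordinate lists.
    Returns the on-curve (x, y) pair for t."""
    if len(xs) == 1:
        return xs[0], ys[0]
    new_xs = []
    new_ys = []
    for i in range(len(xs) - 1):
        # One marker at ratio t along the line from point i to point i + 1.
        new_xs.append((1 - t) * xs[i] + t * xs[i + 1])
        new_ys.append((1 - t) * ys[i] + t * ys[i + 1])
    return curve_point_xy(new_xs, new_ys, t)
```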


So what does this do? If the passed list of points is only one point long, it draws that point. Otherwise, it creates a new list of points that sit at the t ratios (i.e. the "markers" outlined in the algorithm above), and then calls the draw function for this new list.

Simplified drawing

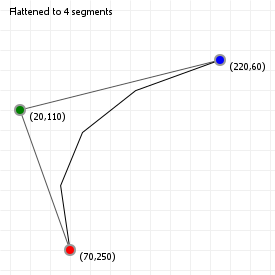

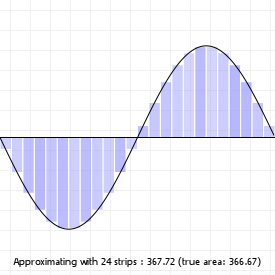

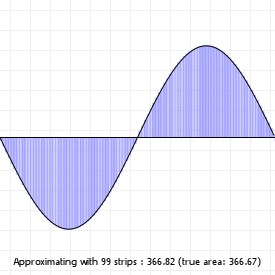

We can also simplify the drawing process by "sampling" the curve at certain points, and then joining those points up with straight lines, a process known as "flattening", as we are reducing a curve to a simple sequence of straight, "flat" lines.

We can do this by saying "we want X segments", and then sampling the curve at intervals that are spaced such that we end up with the number of segments we wanted. The advantage of this method is that it's fast: instead of evaluating 100 or even 1000 curve coordinates, we can sample a much lower number and still end up with a curve that sort-of-kind-of looks good enough. The disadvantage of course is that we lose the precision of working with "the real curve", so we usually can't use the flattened curve for doing true intersection detection, or curvature alignment.

Try clicking on the sketch and using your up and down arrow keys to lower the number of segments for both the quadratic and cubic curve. You'll notice that for certain curvatures, a low number of segments works quite well, but for more complex curvatures (try this for the cubic curve), a higher number is required to capture the curvature changes properly.

How to implement curve flattening

Let's just use the algorithm we just specified, and implement that:
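A sketch in Python, assuming points are (x, y) tuples and that the desired number of segments is given up front. The names `de_casteljau` and `flatten_curve` are illustrative, and the evaluator could be swapped for any other way of computing curve points.

```python
def de_casteljau(points, t):
    """Evaluate a Bézier curve given as a list of (x, y) tuples."""
    while len(points) > 1:
        points = [((1 - t) * x0 + t * x1, (1 - t) * y0 + t * y1)
                  for (x0, y0), (x1, y1) in zip(points, points[1:])]
    return points[0]


def flatten_curve(points, segment_count):
    """Sample the curve at segment_count + 1 evenly spaced t values, giving
    the end points of segment_count straight line segments."""
    coordinates = [points[0]]
    for i in range(1, segment_count + 1):
        t = i / segment_count  # dividing (not stepping) lands exactly on t = 1
        coordinates.append(de_casteljau(points, t))
    return coordinates
```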


And done, that's the algorithm implemented. That just leaves drawing the resulting "curve" as a sequence of lines:
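A sketch of that drawing step, where `draw_line(p, q)` is a hypothetical callback standing in for whatever graphics API is in use:

```python
def draw_flattened_curve(coordinates, draw_line):
    """Draw a flattened curve as a chain of straight lines, using the first
    coordinate as the starting reference point."""
    coord = coordinates[0]
    for next_coord in coordinates[1:]:
        draw_line(coord, next_coord)
        coord = next_coord
```

Passing something like `lambda p, q: segments.append((p, q))` as `draw_line` collects the segments in a list instead of drawing them, which is handy for testing.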


We start with the first coordinate as reference point, and then just draw lines between each point and its next point.

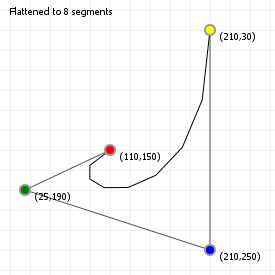

Splitting curves

Using de Casteljau's algorithm, we can also find all the points we need to split up a Bézier curve into two smaller curves, which taken together form the original curve. When we construct de Casteljau's skeleton for some value t, the procedure gives us all the points we need to split a curve at that t value: one curve is defined by all the inside skeleton points found prior to our on-curve point, with the other curve being defined by all the inside skeleton points after our on-curve point.

Implementing curve splitting

We can implement curve splitting by bolting some extra logging onto the de Casteljau function:
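A sketch of that idea in Python, assuming (x, y) tuples. Instead of a drawing function, this version only records the outermost skeleton points at each level: the first point of each level goes into `left`, the last into `right`. Reversing `right` at the end is a choice made here so that both new curves run in the same direction as the original.

```python
def split_curve(points, t):
    """Split a Bézier curve at t with de Casteljau's algorithm.
    Returns (left, right) control point lists, each of the original order."""
    left = []
    right = []

    def step(pts):
        # Log the first and last point of this skeleton level.
        left.append(pts[0])
        right.append(pts[-1])
        if len(pts) == 1:
            return
        next_pts = [((1 - t) * x0 + t * x1, (1 - t) * y0 + t * y1)
                    for (x0, y0), (x1, y1) in zip(pts, pts[1:])]
        step(next_pts)

    step(points)
    right.reverse()  # run from the split point to the original end point
    return left, right
```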


After running this function for some value t, the left and right arrays will contain all the coordinates for two new curves: one to the "left" of our t value, the other on the "right". These new curves will have the same order as the original curve, and can be overlaid exactly on the original curve.

Splitting curves using matrices

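Another way to split curves is to exploit the matrix representation of a Bézier curve. In the section on matrices, we saw that we can represent curves as matrix multiplications. Specifically, we saw these two forms for the quadratic and cubic curves respectively (we'll reverse the Bézier coefficients vector for legibility):

$$B(t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 \\ -2 & 2 & 0 \\ 1 & -2 & 1\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3\end{bmatrix}$$

and

$$B(t) = \begin{bmatrix}1 & t & t^2 & t^3\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 & 0 \\ -3 & 3 & 0 & 0 \\ 3 & -6 & 3 & 0 \\ -1 & 3 & -3 & 1\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3 \\ P_4\end{bmatrix}$$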

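Let's say we want to split the curve at some point t = z, forming two new (obviously smaller) Bézier curves. To find the coordinates for these two Bézier curves, we can use the matrix representation and some linear algebra. First, we separate out the actual "point on the curve" information into a new matrix multiplication, with M the Bézier matrix and P the column of coordinates:

$$B(z \cdot t) = \begin{bmatrix}1 & (z \cdot t) & (z \cdot t)^2\end{bmatrix} \cdot M \cdot P = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 \\ 0 & z & 0 \\ 0 & 0 & z^2\end{bmatrix} \cdot M \cdot P$$

and

$$B(z \cdot t) = \begin{bmatrix}1 & (z \cdot t) & (z \cdot t)^2 & (z \cdot t)^3\end{bmatrix} \cdot M \cdot P = \begin{bmatrix}1 & t & t^2 & t^3\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 & 0 \\ 0 & z & 0 & 0 \\ 0 & 0 & z^2 & 0 \\ 0 & 0 & 0 & z^3\end{bmatrix} \cdot M \cdot P$$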

If we could compact these matrices back to the form [t values] · [Bézier matrix] · [column matrix], with the first two staying the same, then that column matrix on the right would be the coordinates of a new Bézier curve that describes the first segment, from t = 0 to t = z. As it turns out, we can do this quite easily, by exploiting some simple rules of linear algebra (and if you don't care about the derivations, just skip to the end of the box for the results!).

Deriving new hull coordinates

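Deriving the two segments upon splitting a curve takes a few steps, and the higher the curve order, the more work it is, so let's look at the quadratic curve first. Writing Z for the diagonal matrix with entries 1, z and z², we multiply by the identity matrix in the form M · M⁻¹:

$$B(z \cdot t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot Z \cdot M \cdot P = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot M \cdot M^{-1} \cdot Z \cdot M \cdot P$$

We can do this because [M · M⁻¹] is the identity matrix. It's a bit like multiplying something by x/x in calculus: it doesn't do anything to the function, but it does allow you to rewrite it to something that may be easier to work with, or can be broken up differently. In the same way, multiplying our matrix by [M · M⁻¹] has no effect on the total formula, but it does allow us to change the matrix sequence [something · M] to a sequence [M · something], and that makes a world of difference: if we know what [M⁻¹ · Z · M] is, we can apply that to our coordinates, and be left with a proper matrix representation of a quadratic Bézier curve (which is [T · M · P]), with a new set of coordinates that represent the curve from t = 0 to t = z. So let's get computing:

$$Q = M^{-1} \cdot Z \cdot M = \begin{bmatrix}1 & 0 & 0 \\ 1 & \frac{1}{2} & 0 \\ 1 & 1 & 1\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 \\ 0 & z & 0 \\ 0 & 0 & z^2\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0 \\ -2 & 2 & 0 \\ 1 & -2 & 1\end{bmatrix} = \begin{bmatrix}1 & 0 & 0 \\ -(z-1) & z & 0 \\ (z-1)^2 & -2 \cdot (z-1) \cdot z & z^2\end{bmatrix}$$

Excellent! Now we can form our new quadratic curve:

$$B(z \cdot t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot M \cdot Q \cdot P = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot M \cdot \begin{bmatrix}P_1 \\ z \cdot P_2 - (z-1) \cdot P_1 \\ z^2 \cdot P_3 - 2 \cdot z \cdot (z-1) \cdot P_2 + (z-1)^2 \cdot P_1\end{bmatrix}$$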

Brilliant: if we want a subcurve from t = 0 to t = z, we can keep the first coordinate the same (which makes sense), our control point becomes a z-ratio mixture of the original control point and the start point, and the new end point is a mixture that looks oddly similar to a Bernstein polynomial of degree two. These new coordinates are actually really easy to compute directly!

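Of course, that's only one of the two curves. Getting the section from t = z to t = 1 requires doing this again. We first observe that in the previous calculation, we actually evaluated the general interval [0,z]. We were able to write it down in a simpler form because of the zero, but what we actually evaluated, making the zero explicit, was:

$$B(0 + (z-0) \cdot t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot \begin{bmatrix}1 & 0 & 0^2 \\ 0 & (z-0) & 2 \cdot 0 \cdot (z-0) \\ 0 & 0 & (z-0)^2\end{bmatrix} \cdot M \cdot P$$

If we want the interval [z,1], we will be evaluating this instead, calling the new middle matrix Z':

$$B(z + (1-z) \cdot t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot \begin{bmatrix}1 & z & z^2 \\ 0 & (1-z) & 2 \cdot z \cdot (1-z) \\ 0 & 0 & (1-z)^2\end{bmatrix} \cdot M \cdot P$$

We're going to do the same trick of multiplying by the identity matrix, to turn [something · M] into [M · something]:

$$\begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot Z' \cdot M \cdot P = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot M \cdot M^{-1} \cdot Z' \cdot M \cdot P, \qquad Q' = M^{-1} \cdot Z' \cdot M = \begin{bmatrix}(z-1)^2 & -2 \cdot z \cdot (z-1) & z^2 \\ 0 & -(z-1) & z \\ 0 & 0 & 1\end{bmatrix}$$

So, our final second curve looks like:

$$B(z + (1-z) \cdot t) = \begin{bmatrix}1 & t & t^2\end{bmatrix} \cdot M \cdot \begin{bmatrix}z^2 \cdot P_3 - 2 \cdot z \cdot (z-1) \cdot P_2 + (z-1)^2 \cdot P_1 \\ z \cdot P_3 - (z-1) \cdot P_2 \\ P_3\end{bmatrix}$$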

Nice. We see the same thing as before: we can keep the last coordinate the same (which makes sense); our control point becomes a z-ratio mixture of the original control point and the end point, and the new start point is a mixture that looks oddly similar to a Bernstein polynomial of degree two, except this time it uses (z-1) rather than (1-z). These new coordinates are also really easy to compute directly!

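So, using linear algebra rather than de Casteljau's algorithm, we have determined that, for any quadratic curve split at some value t = z, we get two subcurves that are described as Bézier curves with simple-to-derive coordinates:

$$Q \cdot P = \begin{bmatrix}1 & 0 & 0 \\ -(z-1) & z & 0 \\ (z-1)^2 & -2 \cdot (z-1) \cdot z & z^2\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3\end{bmatrix} = \begin{bmatrix}P_1 \\ z \cdot P_2 - (z-1) \cdot P_1 \\ z^2 \cdot P_3 - 2 \cdot z \cdot (z-1) \cdot P_2 + (z-1)^2 \cdot P_1\end{bmatrix}$$

and

$$Q' \cdot P = \begin{bmatrix}(z-1)^2 & -2 \cdot (z-1) \cdot z & z^2 \\ 0 & -(z-1) & z \\ 0 & 0 & 1\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3\end{bmatrix} = \begin{bmatrix}z^2 \cdot P_3 - 2 \cdot z \cdot (z-1) \cdot P_2 + (z-1)^2 \cdot P_1 \\ z \cdot P_3 - (z-1) \cdot P_2 \\ P_3\end{bmatrix}$$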

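We can do the same for cubic curves. However, I'll spare you the actual derivation (don't let that stop you from writing that out yourself, though) and simply show you the resulting new coordinate sets:

$$Q \cdot P = \begin{bmatrix}1 & 0 & 0 & 0 \\ -(z-1) & z & 0 & 0 \\ (z-1)^2 & -2 \cdot (z-1) \cdot z & z^2 & 0 \\ -(z-1)^3 & 3 \cdot (z-1)^2 \cdot z & -3 \cdot (z-1) \cdot z^2 & z^3\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3 \\ P_4\end{bmatrix} = \begin{bmatrix}P_1 \\ z \cdot P_2 - (z-1) \cdot P_1 \\ z^2 \cdot P_3 - 2 \cdot z \cdot (z-1) \cdot P_2 + (z-1)^2 \cdot P_1 \\ z^3 \cdot P_4 - 3 \cdot z^2 \cdot (z-1) \cdot P_3 + 3 \cdot z \cdot (z-1)^2 \cdot P_2 - (z-1)^3 \cdot P_1\end{bmatrix}$$

and

$$Q' \cdot P = \begin{bmatrix}-(z-1)^3 & 3 \cdot (z-1)^2 \cdot z & -3 \cdot (z-1) \cdot z^2 & z^3 \\ 0 & (z-1)^2 & -2 \cdot (z-1) \cdot z & z^2 \\ 0 & 0 & -(z-1) & z \\ 0 & 0 & 0 & 1\end{bmatrix} \cdot \begin{bmatrix}P_1 \\ P_2 \\ P_3 \\ P_4\end{bmatrix} = \begin{bmatrix}z^3 \cdot P_4 - 3 \cdot z^2 \cdot (z-1) \cdot P_3 + 3 \cdot z \cdot (z-1)^2 \cdot P_2 - (z-1)^3 \cdot P_1 \\ z^2 \cdot P_4 - 2 \cdot z \cdot (z-1) \cdot P_3 + (z-1)^2 \cdot P_2 \\ z \cdot P_4 - (z-1) \cdot P_3 \\ P_4\end{bmatrix}$$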

So, looking at our matrices, did we really need to compute the second segment matrix? No, we didn't. Actually having one segment's matrix means we implicitly have the other: push the values of each row in the matrix Q to the right, with zeroes getting pushed off the right edge and appearing back on the left, and then flip the matrix vertically. Presto, you just "calculated" Q'.

Implementing curve splitting this way requires no recursion at all: it is just straight arithmetic with cached values, so it can be cheaper on systems where recursion is expensive. If you're doing computation with devices that are good at matrix multiplication, chopping up a Bézier curve with this method can be a lot faster than applying de Casteljau.
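As a sketch of that "straight arithmetic" approach, the following Python function splits a cubic curve at t = z by directly applying the cubic split coefficients that the matrix derivation produces (mixtures of P1 to P4 built from z and z - 1). Points are assumed to be coordinate tuples; `split_cubic` and its `mix` helper are illustrative names, not part of any library.

```python
def split_cubic(p1, p2, p3, p4, z):
    """Split a cubic Bézier curve at t = z using the closed-form matrix
    results, without recursion. Returns (first, second), four points each."""
    def mix(*terms):
        # Weighted sum of points; terms are (weight, point) pairs.
        dims = len(terms[0][1])
        return tuple(sum(w * p[k] for w, p in terms) for k in range(dims))

    zm1 = z - 1                 # cached: every coefficient uses z and (z - 1)
    zz, zm1zm1 = z * z, zm1 * zm1
    # The on-curve split point, shared by both segments.
    mid = mix((z * zz, p4), (-3 * zz * zm1, p3), (3 * z * zm1zm1, p2), (-zm1 * zm1zm1, p1))
    first = [
        p1,
        mix((z, p2), (-zm1, p1)),
        mix((zz, p3), (-2 * z * zm1, p2), (zm1zm1, p1)),
        mid,
    ]
    second = [
        mid,
        mix((zz, p4), (-2 * z * zm1, p3), (zm1zm1, p2)),
        mix((z, p4), (-zm1, p3)),
        p4,
    ]
    return first, second
```

The results should match what the de Casteljau-based splitting produces, up to floating point rounding.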

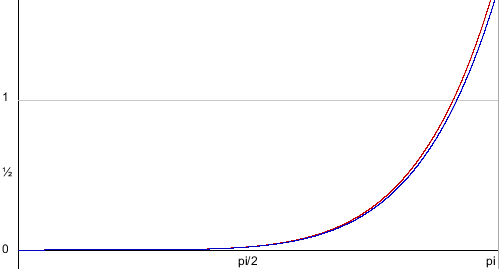

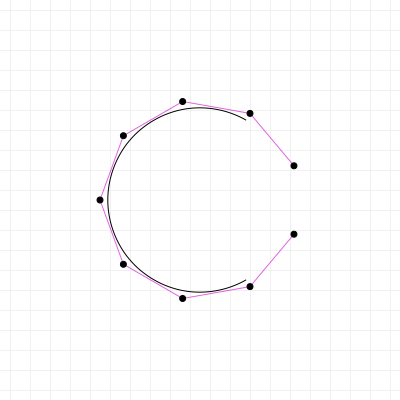

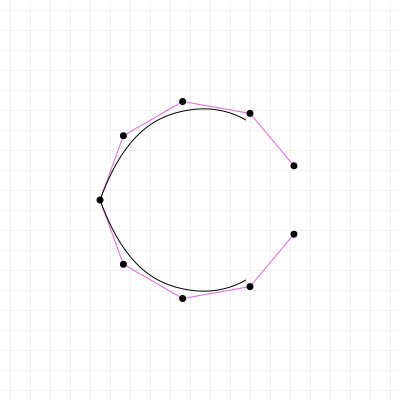

Lowering and elevating curve order

One interesting property of Bézier curves is that an nth order curve can always be perfectly represented by an (n+1)th order curve, by giving the higher-order curve specific control points.

If we have a curve with three points, then we can create a curve with four points that exactly reproduces the original curve. First, we give it the same start and end points, and for its two control points we pick "1/3rd start + 2/3rd control" and "2/3rd control + 1/3rd end". Now we have exactly the same curve as before, except represented as a cubic curve rather than a quadratic curve.

The general rule for raising an nth order curve to an (n+1)th order curve is as follows (observing that the start and end weights are the same as the start and end weights for the old curve):
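A Python sketch of that rule, assuming the standard degree-elevation formula in which each new interior point i mixes old points i-1 and i with weights i/k and 1 - i/k, where k is the new degree. For a quadratic (k = 3) this reduces to exactly the "1/3rd start + 2/3rd control" and "2/3rd control + 1/3rd end" mixtures described above. The function name is illustrative.

```python
def raise_order(points):
    """Return len(points) + 1 control points that describe exactly the same
    curve as the given (x, y) control points (degree elevation)."""
    k = len(points)            # degree of the new curve (old degree + 1)
    raised = [points[0]]       # the start point stays the same
    for i in range(1, k):
        a = i / k              # weight for the previous old point
        (x0, y0), (x1, y1) = points[i - 1], points[i]
        raised.append((a * x0 + (1 - a) * x1, a * y0 + (1 - a) * y1))
    raised.append(points[-1])  # the end point stays the same
    return raised
```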

However, this rule also has as a direct consequence that you cannot generally safely lower a curve from nth order to (n-1)th order, because the control points cannot be "pulled apart" cleanly. We can try to, but the resulting curve will not be identical to the original, and may in fact look completely different.

There is, however, a surprisingly good way to ensure that a lower order curve looks "as close as reasonably possible" to the original curve: we can optimise the "least-squares distance" between the original curve and the lower order curve, in a single operation (also explained over on Sirver's Castle). To use it, though, we'll need to do some calculus work and then switch over to linear algebra. As mentioned in the section on matrix representations, some things can be done much more easily with matrices than with calculus functions, and this is one of those things. So... let's go!

We start by taking the standard Bézier function, and condensing it a little:

Then, we apply one of those silly (actually, super useful) calculus tricks: (1-t) plus t always sums to 1, no matter what t is, so certainly for our t values between zero and one (inclusive). As such, we can express any value as a sum of a (1-t) part and a t part:

So, with that seemingly trivial observation, we rewrite that Bézier function by splitting it up into a sum of a (1-t) and t component:

So far so good. Now, to see why we did this, let's write out the (1-t) and t parts, and see what that gives us.

I promise, it's about to make sense. We start with (1-t):

So by using this seemingly silly trick, we can suddenly express part of our nth order Bézier function in terms of an (n+1)th order Bézier function. And that sounds a lot like raising the curve order! Of course we need to be able to repeat that trick for the t part, but that's not a problem:

So, with both of those changed from an order n expression to an order (n+1) expression, we can put them back together again. Now, where the order n function had a summation from 0 to n, the order n+1 function uses a summation from 0 to n+1, but this shouldn't be a problem as long as we can add some new terms that "contribute nothing". In the next section on derivatives, there is a discussion about why "higher terms than there is a binomial for" and "lower than zero terms" both "contribute nothing". So as long as we can add terms that have the same form as the terms we need, we can just include them in the summation, they'll sit there and do nothing, and the resulting function stays identical to the lower order curve.

Let's do this:

And this is where we switch over from calculus to linear algebra, and matrices: we can now express this relation between Bézier(n,t) and Bézier(n+1,t) as a very simple matrix multiplication:

where the matrix M is an n+1 by n matrix, and looks like:

That might look unwieldy, but it's really just a mostly-zeroes matrix, with a very simple fraction on the diagonal, and an even simpler fraction to the left of it. Multiplying a list of coordinates with this matrix means we can plug the resulting transformed coordinates into the one-order-higher function and get an identical looking curve.

Not too bad!

Equally interesting, though, is that with this matrix operation established, we can now use an incredibly powerful and ridiculously simple way to find out a "best fit" way to reverse the operation, called the normal equation. What it does is minimize the sum of the square differences between one set of values and another set of values. Specifically, if we can express that as some function A x = b, we can use it. And as it so happens, that's exactly what we're dealing with, so:

The steps taken here are:

- We have a function in a form that the normal equation can be used with, so

- apply the normal equation!

- Then, we want to end up with just $B_n$ on the left, so we start by left-multiplying both sides such that we'll end up with lots of stuff on the left that simplifies to "a factor 1", which in matrix maths is the identity matrix.

- In fact, by left-multiplying with the inverse of what was already there, we've effectively "nullified" (but really, one-inified) that big, unwieldy block into the identity matrix I. So we substitute the mess with I, and then

- because multiplication with the identity matrix does nothing (like multiplying by 1 does nothing in regular algebra), we just drop it.

And we're done: we now have an expression that lets us approximate an (n+1)th order curve with a lower nth order curve. It generally won't be an exact fit, but it is the best approximation in the least-squares sense. So, let's apply these rules for raising and lowering curve order to a (semi) random curve, using the following graphic. Select the sketch, which has movable control points, and press your up and down arrow keys to raise or lower the curve order.
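A stdlib-only Python sketch of lowering via the normal equation, assuming (x, y) tuples. It builds the (n+1) by n elevation matrix M, then solves (MᵀM)·Bₙ = Mᵀ·Bₙ₊₁ per coordinate axis with a small Gaussian elimination helper. Solving that linear system gives the same result as multiplying by the inverse of (MᵀM), and is the usual way to do it in practice. All function names here are illustrative.

```python
def elevation_matrix(count):
    """(count + 1) x count matrix M that turns `count` control points into
    count + 1 control points describing the identical curve."""
    k = count  # degree of the raised curve
    m = [[0.0] * count for _ in range(count + 1)]
    m[0][0] = 1.0
    for i in range(1, k):
        m[i][i - 1] = i / k      # weight for the previous point
        m[i][i] = 1 - i / k      # weight for the current point (diagonal)
    m[k][count - 1] = 1.0
    return m


def solve(a, b):
    """Solve a . x = b with Gaussian elimination and partial pivoting."""
    n = len(a)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            f = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= f * aug[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        rest = sum(aug[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (aug[r][n] - rest) / aug[r][r]
    return x


def lower_order(points):
    """Least-squares curve with one control point fewer, from the normal
    equation (M^T M) B_n = M^T B_(n+1), solved per coordinate axis."""
    count = len(points) - 1
    m = elevation_matrix(count)
    rows = len(m)
    mtm = [[sum(m[r][i] * m[r][j] for r in range(rows)) for j in range(count)]
           for i in range(count)]
    axes = []
    for axis in range(2):
        b = [p[axis] for p in points]
        mtb = [sum(m[r][i] * b[r] for r in range(rows)) for i in range(count)]
        axes.append(solve(mtm, mtb))
    return list(zip(*axes))
```

When the input curve really is a raised lower-order curve, the least-squares fit recovers the original control points; otherwise it gives the closest lower-order approximation.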

Derivatives

There are a number of useful things that you can do with Bézier curves based on their derivative, and one of the more amusing observations about Bézier curves is that their derivatives are, in fact, also Bézier curves. Better still, differentiating a Bézier curve is relatively straightforward, although we do need a bit of math.

First, let's look at the derivative rule for Bézier curves, which is:

which we can also write (observing that b in this formula is the same as our w weights, and that n times a summation is the same as a summation where each term is multiplied by n) as:

Or, in plain text: the derivative of an nth degree Bézier curve is an (n-1)th degree Bézier curve, with one fewer term, and new weights $w'_0 \ldots w'_{n-1}$ derived from the original weights as $n(w_{i+1} - w_i)$. So for a 3rd degree curve, with four weights, the derivative has three new weights: $w'_0 = 3(w_1-w_0)$, $w'_1 = 3(w_2-w_1)$ and $w'_2 = 3(w_3-w_2)$.
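That weight rule is small enough to sketch directly in Python. The function below works on the weights for one coordinate axis at a time (the name is illustrative); applying it repeatedly gives the second, third, and further derivatives.

```python
def derivative_weights(weights):
    """Weights of the derivative curve: n * (w[i+1] - w[i]), one fewer than before.
    An empty list stands for the zero function."""
    n = len(weights) - 1
    return [n * (weights[i + 1] - weights[i]) for i in range(n)]


if __name__ == "__main__":
    w = [1, 2, 4, 8]  # one coordinate axis of a cubic curve
    while w:
        print(w)
        w = derivative_weights(w)
```

For these cubic weights the loop prints four weight lists (the curve, then its first, second and third derivatives) before the next derivative runs out of terms.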

"Slow down, why is that true?"

Sometimes just being told "this is the derivative" is nice, but you might want to see why this is indeed the case. As such, let's have a look at the proof for this derivative. First off, the weights don't depend on t, so the derivative involves only the derivative of the polynomial basis function. So, let's find that:

Applying the product and chain rules gives us:

Which is hard to work with, so let's expand that properly:

Now, the trick is to turn this expression into something that has binomial coefficients again, so we want to end up with things that look like "x! over y!(x-y)!". If we can do that in a way that involves terms of n-1 and k-1, we'll be on the right track.

And that's the first part done: the two components inside the parentheses are actually regular, lower-order Bézier expressions:

Now to apply this to our weighted Bézier curves. We'll write out the plain curve formula that we saw earlier, and then work our way through to its derivative:

If we expand this (with some color to show how terms line up), and reorder the terms by increasing values for k we see the following:

Two of these terms fall away: the first term falls away because there is no -1st term in a summation. As such, it always contributes "nothing", so we can safely ignore it completely for the purpose of finding the derivative function. The other term is the very last term in this expansion: one involving $B_{n-1,n}$. This term would have a binomial coefficient of [i choose i+1], which is a non-existent binomial coefficient. Again, this term would contribute "nothing", so we can ignore it, too. This means we're left with:

And that's just a summation of lower order curves:

We can rewrite this as a normal summation, and we're done:

Let's rewrite that in a form similar to our original formula, so we can see the difference. We will first list our original formula for Bézier curves, and then the derivative:

What are the differences? In terms of the actual Bézier curve, virtually nothing! We lowered the order (rather than n, it's now n-1), but it's still the same Bézier function. The only real difference is in how the weights change when we derive the curve's function. If we have four points A, B, C, and D, then the derivative will have three points, the second derivative two, and the third derivative one:

We can keep performing this trick for as long as we have more than one weight. Once only one weight is left, that derivative is a constant, and differentiating once more leaves a summation with no terms at all, so the result of our "Bézier function" is zero. As such, a quadratic curve has no (non-zero) third derivative, a cubic curve has no fourth derivative, and generalized: an nth degree curve has n (meaningful) derivatives, with any further derivative being zero.

Tangents and normals

If you want to move objects along a curve, or "away from" a curve, the two vectors you're most interested in are the tangent vector and normal vector for curve points. These are actually really easy to find. For moving and orienting along a curve, we use the tangent, which indicates the direction of travel at specific points, and is literally just the first derivative of our curve:

This gives us the directional vector we want. We can normalize it to give us uniform directional vectors (having a length of 1.0) at each point, and then do whatever it is we want to do based on those directions:

The tangent is very useful for moving along a curve, but what if we want to move away from the curve instead, perpendicular to the curve at some point t? In that case we want the normal vector. This vector runs at a right angle to the direction of the curve, and is typically of length 1.0, so all we have to do is rotate the normalized directional vector and we're done:

Rotating coordinates is actually very easy, if you know the rule for it. You might find it explained as "applying a rotation matrix", which is what we'll look at here, too. Essentially, the idea is to take the circles over which we can rotate, and simply "slide the coordinates" over these circles by the desired angle. If we want a quarter circle turn, we take the coordinate, slide it along the circle by a quarter turn, and done.

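To turn any point (x,y) into a rotated point (x',y') (over 0,0) by some angle φ, we apply this nice and easy computation:

$$x' = x \cdot \cos(\phi) - y \cdot \sin(\phi)$$
$$y' = x \cdot \sin(\phi) + y \cdot \cos(\phi)$$

Which is the "long" version of the following matrix transformation:

$$\begin{bmatrix}x' \\ y'\end{bmatrix} = \begin{bmatrix}\cos(\phi) & -\sin(\phi) \\ \sin(\phi) & \cos(\phi)\end{bmatrix} \cdot \begin{bmatrix}x \\ y\end{bmatrix}$$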

And that's all we need to rotate any coordinate. Note that for quarter, half, and three-quarter turns these functions become even easier, since cos and sin for these angles are, respectively: 0 and 1, -1 and 0, and 0 and -1.
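Putting the tangent and the rotation together, here is a Python sketch for a cubic curve given as four (x, y) tuples. It takes the first derivative (a quadratic curve with weights 3(P[i+1] - P[i])), normalizes it, and rotates it by a quarter turn, where cos = 0 and sin = 1 turn the rotation into (x, y) → (-y, x). The function names are illustrative, and the sketch assumes the derivative is not the zero vector at the chosen t.

```python
import math


def cubic_derivative(points, t):
    """B'(t) for a cubic curve given as four (x, y) tuples. The derivative is a
    quadratic Bézier curve with weights 3 * (P[i+1] - P[i])."""
    d = [(3 * (x1 - x0), 3 * (y1 - y0))
         for (x0, y0), (x1, y1) in zip(points, points[1:])]
    mt = 1 - t
    a, b, c = mt * mt, 2 * mt * t, t * t
    return (a * d[0][0] + b * d[1][0] + c * d[2][0],
            a * d[0][1] + b * d[1][1] + c * d[2][1])


def rotate(x, y, phi):
    """Rotate (x, y) around (0, 0) by phi radians."""
    c, s = math.cos(phi), math.sin(phi)
    return (x * c - y * s, x * s + y * c)


def tangent_and_normal(points, t):
    """Unit tangent and unit normal at t; assumes B'(t) is not zero."""
    dx, dy = cubic_derivative(points, t)
    length = math.hypot(dx, dy)
    tx, ty = dx / length, dy / length
    # A quarter turn has cos = 0 and sin = 1, so rotate() reduces to (-y, x).
    nx, ny = -ty, tx
    return (tx, ty), (nx, ny)
```

The general `rotate` function gives the same normal as the shortcut (up to floating point rounding) when called with φ = π/2.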

But why does this work? Why this matrix multiplication? Wikipedia (technically, Thomas Herter and Klaus Lott) tells us that a rotation matrix can be treated as a sequence of three (elementary) shear operations. When we combine this into a single matrix operation (because all matrix multiplications can be collapsed), we get the matrix that you see above. DataGenetics have an excellent article about this very thing: it's really quite cool, and I strongly recommend taking a quick break from this primer to read that article.

The following two graphics show the tangent and normal along a quadratic and cubic curve, with the direction vector coloured blue, and the normal vector coloured red (the markers are spaced evenly in t, not equidistantly along the curve).

Working with 3D normals

Before we move on to the next section we need to spend a little bit of time on the difference between 2D and 3D. While for many things this difference is irrelevant and the procedures are identical (for instance, getting the 3D tangent is just doing what we do for 2D, but for x, y, and z, instead of just for x and y), when it comes to normals things are a little more complex, and thus more work. Mind you, it's not "super hard", but there are more steps involved and we should have a look at those.

Getting normals in 3D is in principle the same as in 2D: we take the normalised tangent vector, and then rotate it by a quarter turn. However, this is where things get a little more complex: we can turn in quite a few directions, since "the normal" in 3D is a plane, not a single vector, so we basically need to define what "the" normal is in the 3D case.

The "naïve" approach is to construct what is known as the Frenet normal, where we follow a simple recipe that works in many cases (but does super bizarre things in some others). The idea is that even though there are infinitely many vectors that are perpendicular to the tangent (i.e. make a 90 degree angle with it), the tangent itself sort of lies on its own plane already: since each point on the curve (no matter how closely spaced) has its own tangent vector, we can say that each point lies in the same plane as the local tangent, as well as the tangents "right next to it".

Even if that difference in tangent vectors is minute, "any difference" is all we need to find out what that plane is - or rather, what the vector perpendicular to that plane is. Which is what we need: if we can calculate that vector, and we have the tangent vector that we know lies on a plane, then we can rotate the tangent vector over the perpendicular, and presto. We have computed the normal using the same logic we used for the 2D case: "just rotate it 90 degrees".

So let's do that! And in a surprise twist, we can do this in four lines:

- a = normalize(B'(t))

- b = normalize(a + B''(t))

- r = normalize(b × a)

- normal = normalize(r × a)

Let's unpack that a little:

- We start by taking the normalized vector for the derivative at some point on the curve. We normalize it so the maths is less work. Less work is good.

- Then, we compute b which represents what a next point's tangent would be if the curve stopped changing at our point and just had the same derivative and second derivative from that point on.

- This lets us find two vectors (the derivative, and the second derivative added to the derivative) that lie on the same plane, which means we can use them to compute a vector perpendicular to that plane, using an elementary vector operation called the cross product. (Note that while that operation uses the × operator, it's most definitely not a multiplication!) The result of that gives us a vector that we can use as the "axis of rotation" for turning the tangent a quarter circle to get our normal, just like we did in the 2D case.

- Since the cross product lets us find a vector that is perpendicular to some plane defined by two other vectors, and since the normal vector should be perpendicular to the plane that the tangent and the axis of rotation lie in, we can use the cross product a second time, and immediately get our normal vector.
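The four-line recipe translates almost directly into Python. This sketch assumes the first and second derivative vectors at some t have already been computed and are passed in as 3-tuples; the helper names are illustrative.

```python
import math


def normalize(v):
    """Scale a 3D vector to length 1 (assumes it is not the zero vector)."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def cross(a, b):
    """Cross product of two 3D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def frenet_normal(d1, d2):
    """Frenet normal from the first (d1) and second (d2) derivative vectors
    at some t. Breaks down where d2 is parallel to d1 (e.g. straight lines),
    because the cross product is then the zero vector."""
    a = normalize(d1)
    b = normalize(tuple(ai + di for ai, di in zip(a, d2)))
    r = normalize(cross(b, a))   # axis of rotation
    return normalize(cross(r, a))
```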

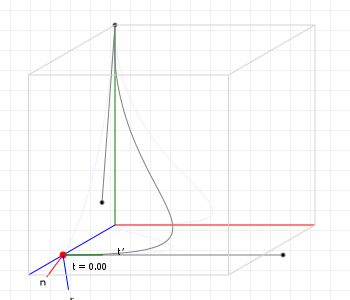

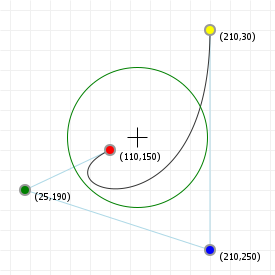

And then we're done, we found "the" normal vector for a 3D curve. Let's see what that looks like for a sample curve, shall we? You can move your cursor across the graphic from left to right, to show the normal at a point with a t value that is based on your cursor position: all the way on the left is 0, all the way on the right is 1, midway is t=0.5, etc:

However, if you've played with that graphic a bit, you might have noticed something odd. The normal seems to "suddenly twist around the curve" between t=0.65 and t=0.75... Why is it doing that?

As it turns out, it's doing that because that's how the maths works, and that's the problem with Frenet normals: while they are "mathematically correct", they are "practically problematic", and so for any kind of graphics work what we really want is a way to compute normals that just... look good.

Thankfully, Frenet normals are not our only option.

Another option is to take a slightly more algorithmic approach and compute a form of Rotation Minimising Frame (also known as "parallel transport frame" or "Bishop frame") instead, where a "frame" is a set made up of the tangent, the rotational axis, and the normal vector, centered on an on-curve point.

These types of frames are computed based on "the previous frame", so we cannot simply compute these "on demand" for single points, as we could for Frenet frames; we have to compute them for the entire curve. Thankfully, the procedure is pretty simple, and can be performed at the same time that you're building lookup tables for your curve.

The idea is to take a starting "tangent/rotation axis/normal" frame at t=0, and then compute what the next frame "should" look like by applying some rules that yield a good looking next frame. In the case of the RMF paper linked above, those rules are:

- Take a point on the curve for which we know the RM frame already,

- take a next point on the curve for which we don't know the RM frame yet, and

- reflect the known frame onto the next point, by treating the plane through the curve at the point exactly between the next and previous points as a "mirror".

- This gives the next point a tangent vector that's essentially pointing in the opposite direction of what it should be, and a normal that's slightly off-kilter, so:

- reflect the vectors of our "mirrored frame" a second time, but this time using the plane through the "next point" itself as "mirror".

- Done: the tangent and normal have been fixed, and we have a good looking frame to work with.

So, let's write some code for that!

Implementing Rotation Minimising Frames

We first assume we have a function for calculating the Frenet frame at a point, which we already discussed above, in a way that it yields a frame made up of an origin (the on-curve point), a tangent vector, a rotational axis vector, and a normal vector.


Then, we can write a function that generates a sequence of RM frames in the following manner:
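A self-contained Python sketch of that procedure for a 3D Bézier curve, assuming points are 3-tuples and frames are dicts with the illustrative keys `o` (origin), `t` (tangent), `r` (rotational axis) and `n` (normal). It starts from the Frenet frame at t = 0 and then applies the two reflections described above (this is the "double reflection" way of computing rotation minimising frames). A fixed number of evenly spaced t steps is an assumption of this sketch.

```python
import math


def add(a, b):
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def scale(a, s):
    return tuple(x * s for x in a)


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(a):
    return scale(a, 1 / math.sqrt(dot(a, a)))


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def bezier(points, t):
    """Evaluate a Bézier curve of 3D points with de Casteljau's algorithm."""
    while len(points) > 1:
        points = [add(scale(p, 1 - t), scale(q, t)) for p, q in zip(points, points[1:])]
    return points[0]


def derivative(points):
    """Control points of the derivative curve: n * (P[i+1] - P[i])."""
    n = len(points) - 1
    return [scale(sub(q, p), n) for p, q in zip(points, points[1:])]


def frenet_frame(points, t):
    """Frenet frame at t, using the four-step recipe from the previous section."""
    d1 = derivative(points)
    a = normalize(bezier(d1, t))
    b = normalize(add(a, bezier(derivative(d1), t)))
    r = normalize(cross(b, a))
    return {"o": bezier(points, t), "t": a, "r": r, "n": normalize(cross(r, a))}


def rmf_frames(points, steps=24):
    """Rotation minimising frames at t = 0, 1/steps, ..., 1."""
    d1 = derivative(points)
    frames = [frenet_frame(points, 0.0)]
    for i in range(1, steps + 1):
        x0 = frames[-1]
        t1 = i / steps
        o1 = bezier(points, t1)
        tangent1 = normalize(bezier(d1, t1))
        # First reflection: mirror plane halfway between the two on-curve points.
        v1 = sub(o1, x0["o"])
        c1 = dot(v1, v1)
        r_l = sub(x0["r"], scale(v1, 2 / c1 * dot(v1, x0["r"])))
        t_l = sub(x0["t"], scale(v1, 2 / c1 * dot(v1, x0["t"])))
        # Second reflection: maps the mirrored tangent onto the real tangent.
        v2 = sub(tangent1, t_l)
        c2 = dot(v2, v2)
        r1 = r_l if c2 < 1e-24 else sub(r_l, scale(v2, 2 / c2 * dot(v2, r_l)))
        frames.append({"o": o1, "t": tangent1, "r": r1, "n": cross(r1, tangent1)})
    return frames
```

Because reflections preserve lengths and angles, each new axis stays a unit vector perpendicular to the new tangent, so the normal computed from their cross product is a unit vector as well.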


Ignoring comments, this is certainly more code than when we were just computing a single Frenet frame, but it's not a crazy amount more code to get much better looking normals.

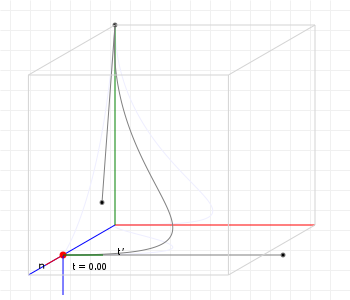

Speaking of better looking, what does this actually look like? Let's revisit that earlier curve, but this time use rotation minimising frames rather than Frenet frames:

That looks so much better!

For those reading along with the code: we don't even strictly speaking need a Frenet frame to start with: we could, for instance, treat the z-axis as our initial axis of rotation, so that our initial normal is (0,0,1) × tangent, and then take things from there, but having that initial "mathematically correct" frame so that the initial normal seems to line up based on the curve's orientation in 3D space is just nice.

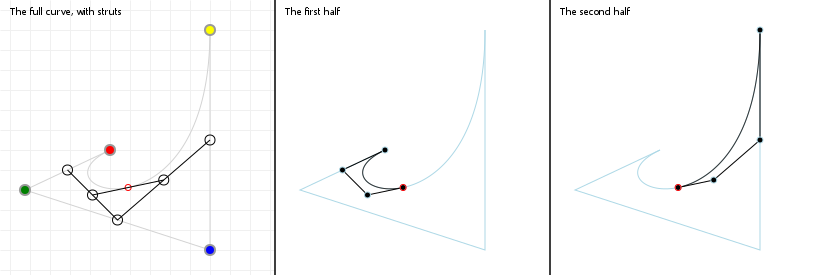

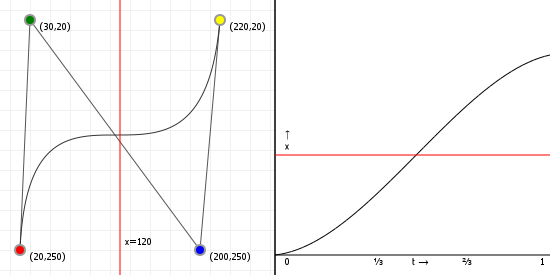

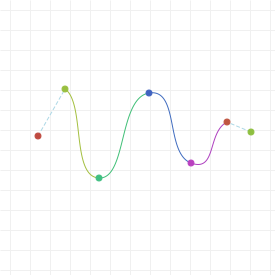

Component functions

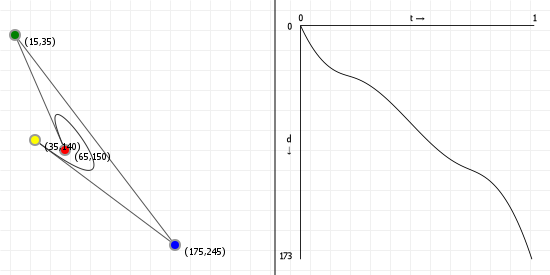

One of the first things people run into when they start using Bézier curves in their own programs is "I know how to draw the curve, but how do I determine the bounding box?". It's actually reasonably straightforward to do so, but it requires some knowledge of exploiting math to get the values we need. For bounding boxes, we aren't actually interested in the curve itself, but only in its "extremities": the minimum and maximum values the curve has for its x- and y-axis values. If you remember your calculus (provided you ever took calculus, otherwise it's going to be hard to remember) we can determine function extremities using the first derivative of that function, but this poses a problem, since our function is parametric: every axis has its own function.

The solution: compute the derivative for each axis separately, and then fit them back together in the same way we do for the original.

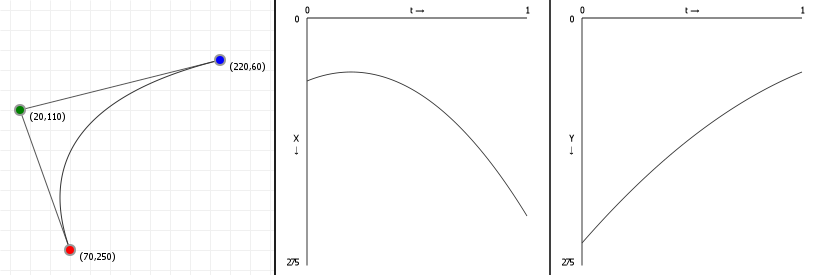

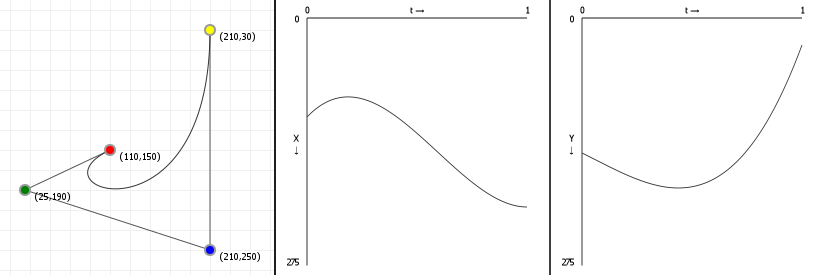

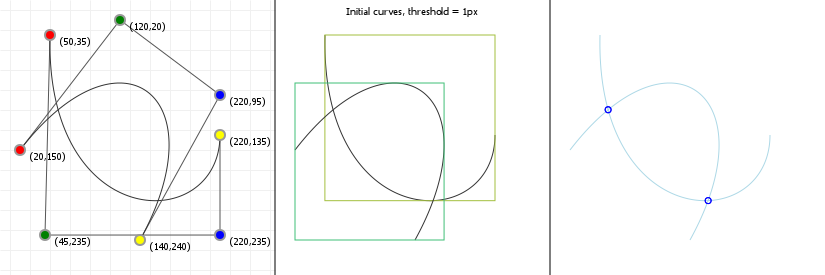

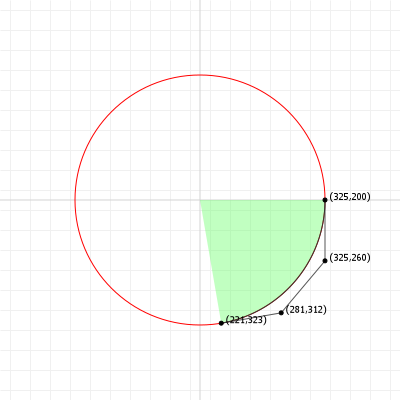

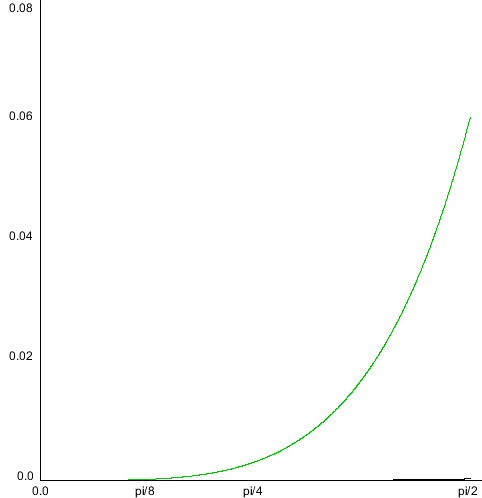

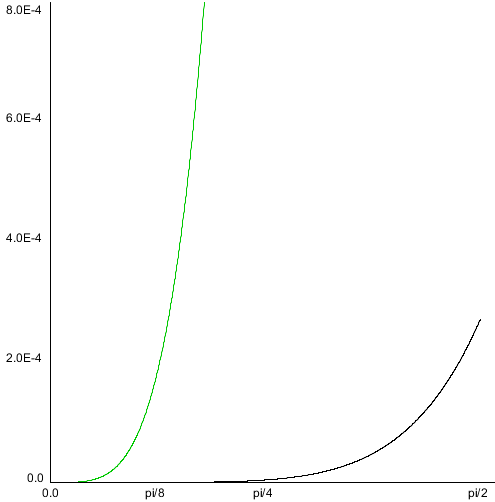

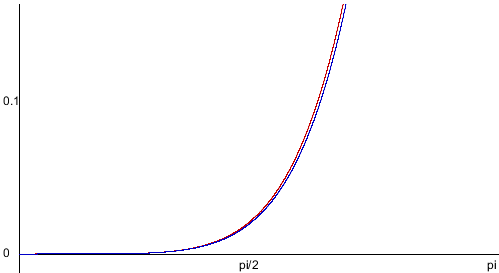

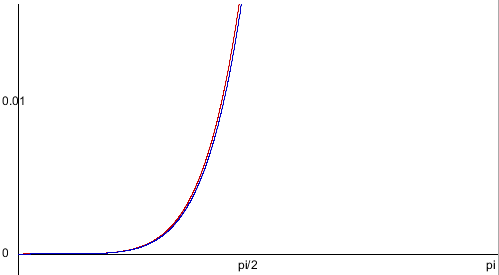

Let's look at how a parametric Bézier curve "splits up" into two normal functions, one for the x-axis and one for the y-axis. Note the leftmost figure is again an interactive curve, without labeled axes (you get coordinates in the graph instead). The center and rightmost figures are the component functions for computing the x-axis value, given a value for t (between 0 and 1 inclusive), and the y-axis value, respectively.

If you move points in a curve sideways, you should only see the middle graph change; likewise, moving points vertically should only show a change in the right graph.

Finding extremities: root finding

Now that we understand (well, superficially anyway) the component functions, we can find the extremities of our Bézier curve by finding maxima and minima on the component functions, by solving the equation B'(t) = 0. We've already seen that the derivative of a Bézier curve is a simpler Bézier curve, but how do we solve the equality? Fairly easily, actually, until our derivatives are 4th order or higher... then things get really hard. But let's start simple:

Quadratic curves: linear derivatives.

The derivative of a quadratic Bézier curve is a linear Bézier curve, interpolating between just two terms, which means finding the solution for "where is this line 0" is effectively trivial by rewriting it to a function of t and solving. First we turn our quadratic Bézier function into a linear one, by following the rule mentioned at the end of the derivatives section:

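And then we turn this into our solution for t using basic arithmetic, where a and b are the two weights of that linear derivative:

$$a \cdot (1-t) + b \cdot t = 0 \;\Rightarrow\; a + (b-a) \cdot t = 0 \;\Rightarrow\; t = \frac{-a}{b-a}$$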

Done.

Although with the caveat that if b-a is zero, there is no solution and we probably shouldn't try to perform that division.
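A Python sketch for one axis of a quadratic curve, assuming p1, p2 and p3 are that axis' coordinates. The derivative weights follow the n(w[i+1] - w[i]) rule with n = 2, and the b - a = 0 case is handled explicitly (the function name is illustrative).

```python
def quadratic_extremity(p1, p2, p3):
    """t in [0, 1] where one component function of a quadratic Bézier curve
    has a zero derivative, or None. p1..p3 are that axis' coordinates."""
    a = 2 * (p2 - p1)  # weights of the linear derivative
    b = 2 * (p3 - p2)
    if b - a == 0:
        return None  # no solution: don't divide by zero
    t = -a / (b - a)
    return t if 0 <= t <= 1 else None
```

Running this once for the x coordinates and once for the y coordinates gives the candidate t values for the bounding box, alongside t = 0 and t = 1.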

Cubic curves: the quadratic formula.

The derivative of a cubic Bézier curve is a quadratic Bézier curve, and finding the roots for a quadratic polynomial means we can apply the quadratic formula. If you've seen it before, you'll remember it, and if you haven't, it looks like this:

$$t = \frac{-b \pm \sqrt{b^2 - 4ac}}{2a}$$

So, if we can rewrite the Bézier component function as a plain polynomial, we're done: we just plug the values into the quadratic formula, check whether the value under that square root is negative (if it is, there are no real roots) and then just compute the two values that come out (because of that plus/minus sign we get two). Any value between 0 and 1 is a root that matters for Bézier curves, anything below or above that is irrelevant (because Bézier curves are only defined over the interval [0,1]). So, how do we convert?

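First we turn our cubic Bézier function into a quadratic one, by following the rule mentioned at the end of the derivatives section:

$$B'(t) = v_1(1-t)^2 + 2v_2(1-t)t + v_3t^2, \quad v_1 = 3(P_2 - P_1), \; v_2 = 3(P_3 - P_2), \; v_3 = 3(P_4 - P_3)$$

And then, using these v values, we can find out what our a, b, and c should be:

$$a = v_1 - 2v_2 + v_3, \quad b = 2(v_2 - v_1), \quad c = v_1$$

This gives us three coefficients {a, b, c} that are expressed in terms of v values, where the v values are expressions of our original coordinate values, so we can do some substitution to get:

$$a = 3(-P_1 + 3P_2 - 3P_3 + P_4), \quad b = 6(P_1 - 2P_2 + P_3), \quad c = 3(P_2 - P_1)$$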

Easy-peasy. We can now almost trivially find the roots by plugging those values into the quadratic formula.
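A minimal Python sketch of this cubic case, again for a single component. `cubic_extrema` is a hypothetical name, the coefficients a, b and c are the substituted ones (written out in the code), and the `a == 0` fallback for a derivative that degenerates into a line is our own addition:

```python
import math
from typing import List


def cubic_extrema(p1: float, p2: float, p3: float, p4: float) -> List[float]:
    """t values in [0, 1] where one component of a cubic Bézier curve has a
    zero first derivative."""
    # Coefficients of the derivative a*t^2 + b*t + c, after substitution.
    a = 3 * (-p1 + 3 * p2 - 3 * p3 + p4)
    b = 6 * (p1 - 2 * p2 + p3)
    c = 3 * (p2 - p1)
    if a == 0:
        # The derivative is really linear: b*t + c = 0.
        roots = [] if b == 0 else [-c / b]
    else:
        disc = b * b - 4 * a * c
        if disc < 0:
            return []  # negative value under the square root: no real roots
        sq = math.sqrt(disc)
        roots = [(-b + sq) / (2 * a), (-b - sq) / (2 * a)]
    # Bézier curves only use t in [0, 1].
    return sorted(t for t in roots if 0 <= t <= 1)
```

Calling it once with the x values and once with the y values gives the candidate t values for both dimensions.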

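And as a cubic curve, there is also a meaningful second derivative, which we can compute by simply taking the derivative of the derivative. That second derivative is a linear function, so finding its root works exactly like the quadratic case.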
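Since the second derivative of a cubic is linear, a Python sketch of its root is almost a copy of the quadratic case. The name `cubic_second_derivative_root` is hypothetical, and the weights come from applying the derivative rule twice:

```python
from typing import List


def cubic_second_derivative_root(p1: float, p2: float, p3: float,
                                 p4: float) -> List[float]:
    """t in [0, 1] where the second derivative of one cubic Bézier component
    is zero, if there is one."""
    # B''(t) = a(1-t) + b*t: the derivative of the derivative's weights.
    a = 6 * (p1 - 2 * p2 + p3)
    b = 6 * (p2 - 2 * p3 + p4)
    if b - a == 0:
        return []  # constant second derivative: no root
    t = -a / (b - a)
    return [t] if 0 <= t <= 1 else []
```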

Quartic curves: Cardano's algorithm.

We haven't really looked at them before now, but the next step up would be a quartic curve, a fourth degree Bézier curve. As expected, these have a derivative that is a cubic function, and now things get much harder. Cubic functions don't have a "simple" rule to find their roots, like the quadratic formula, and instead require quite a bit of rewriting to a form that we can even start to try to solve.

Back in the 16th century, before Bézier curves were a thing, and even before calculus itself was a thing, Gerolamo Cardano figured out that even if the general cubic function is really hard to solve, it can be rewritten to a form for which finding the roots is "easier" (even if not "easy"):

$$at^3 + bt^2 + ct + d = 0 \quad\longrightarrow\quad t^3 + pt + q = 0$$

We can see that the easier formula only has two constants, rather than four, and only two expressions involving t, rather than three: this makes things considerably easier to solve, because it lets us use regular algebra to find the values that satisfy the equation.

Now, there is one small hitch: as a cubic function, some of its solutions may be complex numbers rather than plain numbers... And Cardano realised this, centuries before complex numbers were a well-understood and established part of number theory. His interpretation of them was "these numbers are impossible but that's okay because they disappear again in later steps", allowing him to not think about them too much, but we have it even easier: as we're trying to find the roots for display purposes, we don't even care about complex numbers: we're going to simplify Cardano's approach just that tiny bit further by throwing away any solution that's not a plain number.

So, how do we rewrite the hard formula into the easier formula? This is explained in detail over at Ken J. Ward's page for solving the cubic equation, so instead of showing the maths, I'm simply going to show the programming code for solving the cubic equation, with the complex roots getting totally ignored, but if you're interested you should definitely head over to Ken's page and give the procedure a read-through.

Implementing Cardano's algorithm for finding all real roots

The "real roots" part is fairly important, because while you cannot take a square root (or any other even root) of a negative number in the "real" number space (denoted with ℝ), this is perfectly fine in the "complex" number space (denoted with ℂ). And, as it so happens, Cardano is also credited as the first mathematician in history to have made use of complex numbers in his calculations. For this very algorithm!
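As a sketch of what such code can look like, here is a Python version written for this text. It follows the standard route of normalising, substituting t = s - b/3 to reach the depressed form s³ + ps + q = 0, and then branching on the discriminant. It is not necessarily identical to the original implementation. In the three-real-roots case it uses the trigonometric form, which avoids complex intermediate values entirely. The inputs a, b, c, d are plain power-basis coefficients, so Bézier weights need converting first (as we did for the cubic case), and for Bézier purposes you'd then keep only the roots between 0 and 1:

```python
import math
from typing import List


def cuberoot(v: float) -> float:
    # Real cube root; unlike v ** (1 / 3), this also works for negative v.
    return math.copysign(abs(v) ** (1 / 3), v)


def cardano_real_roots(a: float, b: float, c: float, d: float) -> List[float]:
    """All real roots of a*t^3 + b*t^2 + c*t + d = 0 (complex roots are dropped)."""
    eps = 1e-12
    if abs(a) < eps:
        # Not really a cubic: fall back to the quadratic (or linear) case.
        if abs(b) < eps:
            return [] if abs(c) < eps else [-d / c]
        disc = c * c - 4 * b * d
        if disc < 0:
            return []
        sq = math.sqrt(disc)
        return [(-c + sq) / (2 * b), (-c - sq) / (2 * b)]

    # Normalise to t^3 + b*t^2 + c*t + d, then substitute t = s - b/3
    # to get the "easier" depressed form s^3 + p*s + q = 0.
    b, c, d = b / a, c / a, d / a
    p = (3 * c - b * b) / 3
    q = (2 * b ** 3 - 9 * b * c + 27 * d) / 27
    shift = -b / 3
    disc = (q / 2) ** 2 + (p / 3) ** 3

    if disc < 0:
        # Three distinct real roots: trigonometric form (no complex numbers needed).
        r = math.sqrt(-p / 3)
        cos_phi = max(-1.0, min(1.0, -q / (2 * r ** 3)))
        phi = math.acos(cos_phi)
        return [2 * r * math.cos((phi + 2 * math.pi * k) / 3) + shift for k in range(3)]
    if disc == 0:
        # A repeated root: s = 2u, and s = -u (which occurs twice).
        u = cuberoot(-q / 2)
        return [2 * u + shift, -u + shift]
    # One real root; the remaining two are complex and ignored.
    sd = math.sqrt(disc)
    return [cuberoot(-q / 2 + sd) + cuberoot(-q / 2 - sd) + shift]
```

For example, t³ - 6t² + 11t - 6 = (t - 1)(t - 2)(t - 3) comes back as the three roots 1, 2 and 3 (in no particular order, and up to floating point error).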


And that's it. The maths is complicated, but the code is pretty much just "follow the maths, while caching as many values as we can to prevent recomputing things as much as possible" and now we have a way to find all roots for a cubic function and can just move on with using that to find extremities of our curves.

And of course, as a quartic curve also has meaningful second and third derivatives, we can quite easily compute those by using the derivative of the derivative (of the derivative), just as for cubic curves.
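Because every derivative is just another set of Bézier weights, repeated differentiation is a simple loop. Here is a sketch in Python, assuming a component is given as a plain list of weights. `derivative_weights` and `all_derivatives` are hypothetical helper names:

```python
from typing import List


def derivative_weights(weights: List[float]) -> List[float]:
    """Weights of the derivative of a Bézier component: n * (w[i+1] - w[i])."""
    n = len(weights) - 1
    return [n * (weights[i + 1] - weights[i]) for i in range(n)]


def all_derivatives(weights: List[float]) -> List[List[float]]:
    """First, second, third, ... derivative weights, until one weight is left."""
    result = []
    while len(weights) > 1:
        weights = derivative_weights(weights)
        result.append(weights)
    return result
```

For a quartic component with weights [0, 1, 3, 2, 5], this yields [4, 8, -4, 12] (cubic), [12, -36, 48] (quadratic), [-96, 168] (linear), and finally the constant [264].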

Quintic and higher order curves: finding numerical solutions

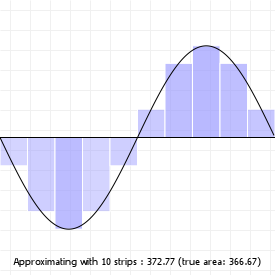

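And this is where things stop, at least as far as practical algebra goes. The derivative of a quintic curve is a quartic polynomial, which can technically still be solved in closed form (using Ferrari's method), but only at the cost of even more complicated maths. For polynomials of degree 5 or higher, there is no general algebraic solution at all (a fact known as the Abel–Ruffini theorem). Instead, for occasions like these, where algebra simply cannot yield an answer (or at least not a reasonable one), we turn to numerical analysis.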

That's a fancy term for saying "rather than trying to find exact answers by manipulating symbols, find approximate answers by describing the underlying process as a combination of steps, each of which can be assigned a number via symbolic manipulation". For example, trying to mathematically compute how much water fits in a completely crazy three dimensional shape would be very hard, even if it would get you the perfect, precise answer. A much easier approach, which would be less perfect but still entirely useful, would be to just grab a bucket and start filling the shape until it was full: just count the number of buckets of water you used. And if we want a more precise answer, we can use smaller buckets.

So that's what we're going to do here, too: we're going to treat the problem as a sequence of steps, and the smaller we can make each step, the closer we'll get to that "perfect, precise" answer. And as it turns out, there is a really nice numerical root-finding algorithm, called the Newton-Raphson root finding method (yes, after that Newton), which we can make use of. The Newton-Raphson approach consists of taking our impossible-to-solve function f(x), picking some initial value x (in principle any value will do, although some starting points work much better than others), and calculating f(x). We can think of that value as the "height" of the function at x. If that height is zero, we're done: we have found a root. If it isn't, we calculate the tangent line at f(x) and calculate at which x value its height is zero (which we've already seen is very easy). That will give us a new x, and we repeat the process until we find a root.

Mathematically, this means that we start with some value x₁ (at step n = 1) and perform the following calculation until f(xₙ) is zero, so that the next x is the same as the one we already have:

$$x_{n+1} = x_n - \frac{f(x_n)}{f'(x_n)}$$

(The Wikipedia article has a decent animation for this process, so I will not add a graphic for that here)
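A bare-bones Python version of that iteration might look like the following. The tolerance and the cap on the number of steps are our own assumptions. In floating point the height rarely becomes exactly zero, so "until f(x) is zero" becomes "until |f(x)| is tiny":

```python
from typing import Callable, Optional


def newton_raphson(f: Callable[[float], float],
                   df: Callable[[float], float],
                   x: float,
                   tolerance: float = 1e-10,
                   max_steps: int = 50) -> Optional[float]:
    """Return an x with |f(x)| < tolerance, or None if the iteration fails."""
    for _ in range(max_steps):
        fx = f(x)
        if abs(fx) < tolerance:
            return x  # close enough to a root
        slope = df(x)
        if slope == 0:
            return None  # flat tangent line: it never reaches height zero
        # Follow the tangent line down to where its height is zero.
        x = x - fx / slope
    return None  # no convergence within max_steps
```

For instance, `newton_raphson(lambda x: x * x - 2, lambda x: 2 * x, 1.0)` converges in a handful of steps to roughly 1.41421, the square root of 2.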

Now, this works well only if we can pick good starting points, and our curve is continuously differentiable and doesn't have oscillations. Glossing over the exact meaning of those terms, the curves we're dealing with conform to those constraints, so as long as we pick good starting points, this will work. So the question is: which starting points do we pick?

As it turns out, Newton-Raphson is so blindingly fast that we could get away with just not picking: we simply run the algorithm from t=0 to t=1 at small steps (say, 1/200th) and the result will be all the roots we want. Of course, this may pose problems for high order Bézier curves: 200 steps for a 200th order Bézier curve is going to go wrong, but that's okay: there is no reason (at least, none that I know of) to ever use Bézier curves of crazy high orders. You might use a fifth order curve to get the "nicest still remotely workable" approximation of a full circle with a single Bézier curve, but that's pretty much as high as you'll ever need to go.
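Put together, the "just run it from lots of starting points" approach could be sketched as follows. This assumes components are given as lists of Bézier weights, evaluates them with de Casteljau's algorithm, and treats roots closer than 1e-6 to each other as duplicates. All names and thresholds here are our own choices:

```python
from typing import List


def derive(w: List[float]) -> List[float]:
    # Derivative weights: n * (w[i+1] - w[i]).
    n = len(w) - 1
    return [n * (w[i + 1] - w[i]) for i in range(n)]


def evaluate(w: List[float], t: float) -> float:
    # de Casteljau's algorithm for a single Bézier component.
    pts = list(w)
    while len(pts) > 1:
        pts = [(1 - t) * a + t * b for a, b in zip(pts, pts[1:])]
    return pts[0]


def find_roots(w: List[float], steps: int = 200,
               tolerance: float = 1e-10) -> List[float]:
    """Roots in [0, 1] of the Bézier component with weights w (at least two),
    found by running Newton-Raphson from steps + 1 evenly spaced t values."""
    if len(w) < 2:
        return []
    dw = derive(w)
    roots: List[float] = []
    for i in range(steps + 1):
        t = i / steps
        for _ in range(50):
            ft = evaluate(w, t)
            if abs(ft) < tolerance:
                # Keep it if it's on the curve and not a root we already found.
                if 0 <= t <= 1 and all(abs(t - r) > 1e-6 for r in roots):
                    roots.append(t)
                break
            slope = evaluate(dw, t)
            if slope == 0:
                break  # flat tangent: give up on this starting point
            t -= ft / slope
    return sorted(roots)
```

To find a component's extremities, call `find_roots` on its derivative weights, for example `find_roots(derive(weights))`. This works the same way for a fifth order curve as for a cubic one.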

In conclusion:

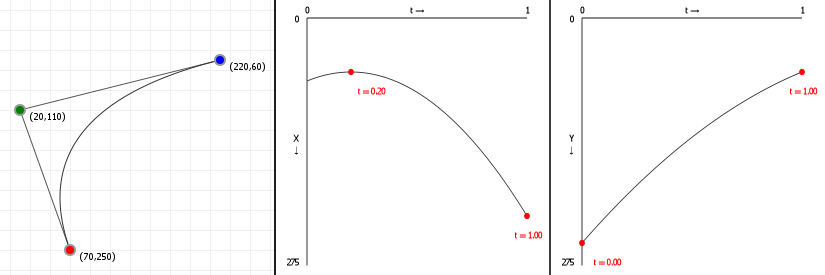

So now that we know how to do root finding, we can determine the first and second derivative roots for our Bézier curves, and show those roots overlaid on the previous graphics. For the quadratic curve, that means just the first derivative, in red:

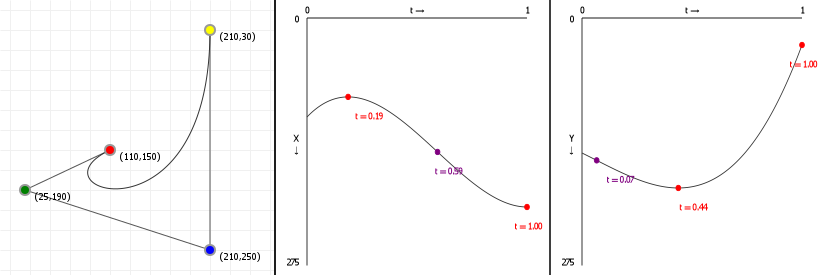

And for cubic curves, that means first and second derivatives, in red and purple respectively:

Bounding boxes

If we have the extremities, and the start/end points, a simple for-loop that tests for min/max values for x and y means we have the four values we need to box in our curve:

Computing the bounding box for a Bézier curve:

- Find all t value(s) for the curve derivative's x- and y-roots.

- Discard any t value that's lower than 0 or higher than 1, because Bézier curves only use the interval [0,1].

- Determine the lowest and highest value when plugging the values t=0, t=1 and each of the found roots into the original functions: the lowest value is the lower bound, and the highest value is the upper bound for the bounding box we want to construct.
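For a cubic curve, those three steps can be sketched in Python as follows. This is a sketch only: `bounding_box` and its helpers are hypothetical names, and points are assumed to be (x, y) tuples. The derivative roots use a rearranged form of the quadratic formula (roots q/a and c/q), which also covers a = 0 and avoids losing precision when a is tiny:

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def derivative_roots(p1: float, p2: float, p3: float, p4: float) -> List[float]:
    """Roots of the derivative a*t^2 + b*t + c of one cubic Bézier component."""
    a = 3 * (-p1 + 3 * p2 - 3 * p3 + p4)
    b = 6 * (p1 - 2 * p2 + p3)
    c = 3 * (p2 - p1)
    disc = b * b - 4 * a * c
    if disc < 0:
        return []
    # Rearranged quadratic formula: the roots are q/a and c/q.
    q = -0.5 * (b + math.copysign(math.sqrt(disc), b))
    roots = []
    if a != 0:
        roots.append(q / a)
    if q != 0:
        roots.append(c / q)
    return roots


def cubic_value(p1: float, p2: float, p3: float, p4: float, t: float) -> float:
    mt = 1 - t
    return mt ** 3 * p1 + 3 * mt ** 2 * t * p2 + 3 * mt * t ** 2 * p3 + t ** 3 * p4


def bounding_box(points: List[Point]) -> Tuple[Point, Point]:
    """(min corner, max corner) of the axis-aligned box around a cubic Bézier."""
    lo, hi = [], []
    for axis in (0, 1):
        comp = [p[axis] for p in points]
        # Steps 1 and 2: derivative roots, keeping only t in [0, 1].
        ts = [t for t in derivative_roots(*comp) if 0 <= t <= 1]
        # Step 3: check t = 0, t = 1 and every root; keep the extremes.
        values = [cubic_value(*comp, t) for t in [0.0, 1.0] + ts]
        lo.append(min(values))
        hi.append(max(values))
    return (lo[0], lo[1]), (hi[0], hi[1])
```

For the arch with control points (0, 0), (0, 100), (100, 100) and (100, 0), this gives the box from (0, 0) to (100, 75).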

Applying this approach to our previous root finding, we get the following axis-aligned bounding boxes (with all curve extremity points shown on the curve):

We can construct even nicer boxes by aligning them along our curve, rather than along the x- and y-axis, but in order to do so we first need to look at how aligning works.

Aligning curves

While there are an incredible number of curves we can define by varying the x- and y-coordinates for the control points, not all curves are actually distinct. For instance, if we define a curve, and then rotate it 90 degrees, it's still the same curve, and we'll find its extremities in the same spots, just at different draw coordinates. As such, one way to make sure we're working with a "unique" curve is to "axis-align" it.

Aligning also simplifies a curve's functions. We can translate (move) the curve so that the first point lies on (0,0), which turns our n term polynomial functions into n-1 term functions. The order stays the same, but we have fewer terms. Then, we can rotate the curves so that the last point always lies on the x-axis, too, making its coordinate (...,0). This further simplifies the function for the y-component to an n-2 term function. For instance, if we have a cubic curve such as this:

Then translating it so that the first coordinate lies on (0,0), moving all x coordinates by -120, and all y coordinates by -160, gives us:

If we then rotate the curve so that its end point lies on the x-axis, the coordinates (integer-rounded for illustrative purposes here) become:

If we drop all the zero-terms, this gives us:

We can see that our original curve definition has been simplified considerably. The following graphics illustrate the result of aligning our example curves to the x-axis, with the cubic case using the coordinates that were just used in the example formulae:
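The translate-then-rotate step can be sketched like this, assuming points are (x, y) tuples. `align` is a hypothetical helper. It uses `math.atan2` to find the angle of the line from the first to the last point, and then rotates everything by the negative of that angle:

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def align(points: List[Point]) -> List[Point]:
    """Translate so the first point lies on (0, 0), then rotate so the last
    point lies on the positive x-axis."""
    tx, ty = points[0]
    ex, ey = points[-1]
    # Angle of the line from the first to the last point.
    angle = math.atan2(ey - ty, ex - tx)
    # Rotating by -angle puts that line onto the x-axis.
    cos_a, sin_a = math.cos(-angle), math.sin(-angle)
    return [((x - tx) * cos_a - (y - ty) * sin_a,
             (x - tx) * sin_a + (y - ty) * cos_a) for x, y in points]
```

For example, `align([(10, 10), (20, 30), (40, 30), (10, 20)])` maps the first point to (0, 0) and the last one to (approximately) (10, 0), while preserving all the distances between points.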

Tight bounding boxes

With our knowledge of bounding boxes, and curve alignment, we can now form the "tight" bounding box for curves. We first align our curve, recording the translation we performed, "T", and the rotation angle we used, "R". We then determine the aligned curve's normal bounding box. Once we have that, we can map that bounding box back to our original curve by rotating it by -R, and then translating it by -T.
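Here is a sketch of that procedure for cubic curves in Python. It combines the alignment and the regular bounding box steps, uses hypothetical names throughout, and returns the four corners of the box in the original coordinate system:

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def _extrema_ts(p1: float, p2: float, p3: float, p4: float) -> List[float]:
    # Roots in [0, 1] of the derivative a*t^2 + b*t + c of one cubic component,
    # using the cancellation-free form of the quadratic formula (q/a and c/q).
    a = 3 * (-p1 + 3 * p2 - 3 * p3 + p4)
    b = 6 * (p1 - 2 * p2 + p3)
    c = 3 * (p2 - p1)
    disc = b * b - 4 * a * c
    if disc < 0:
        return []
    q = -0.5 * (b + math.copysign(math.sqrt(disc), b))
    ts = ([q / a] if a != 0 else []) + ([c / q] if q != 0 else [])
    return [t for t in ts if 0 <= t <= 1]


def _cubic(p1: float, p2: float, p3: float, p4: float, t: float) -> float:
    mt = 1 - t
    return mt ** 3 * p1 + 3 * mt ** 2 * t * p2 + 3 * mt * t ** 2 * p3 + t ** 3 * p4


def tight_box(points: List[Point]) -> List[Point]:
    """Corners of an oriented bounding box around a cubic Bézier curve."""
    # Align: translate by T = -points[0], then rotate by R = -angle.
    tx, ty = points[0]
    angle = math.atan2(points[3][1] - ty, points[3][0] - tx)
    ca, sa = math.cos(-angle), math.sin(-angle)
    aligned = [((x - tx) * ca - (y - ty) * sa, (x - tx) * sa + (y - ty) * ca)
               for x, y in points]
    # The regular axis-aligned box of the aligned curve.
    lo, hi = [], []
    for axis in (0, 1):
        comp = [p[axis] for p in aligned]
        values = [_cubic(*comp, t) for t in [0.0, 1.0] + _extrema_ts(*comp)]
        lo.append(min(values))
        hi.append(max(values))
    corners = [(lo[0], lo[1]), (hi[0], lo[1]), (hi[0], hi[1]), (lo[0], hi[1])]
    # Map back: rotate by -R (= +angle), then translate by -T (= +points[0]).
    cb, sb = math.cos(angle), math.sin(angle)
    return [(x * cb - y * sb + tx, x * sb + y * cb + ty) for x, y in corners]
```

Rotating a curve and then boxing it should give a box of the same size as boxing the unrotated curve, which makes for an easy sanity check.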

We now have nice tight bounding boxes for our curves:

These are, strictly speaking, not necessarily the tightest possible bounding boxes. It is possible to compute the optimal bounding box by determining which spanning lines we need to effect a minimal box area, but because of the parametric nature of Bézier curves this is actually a rather costly operation, and the gain in bounding precision is often not worth it.

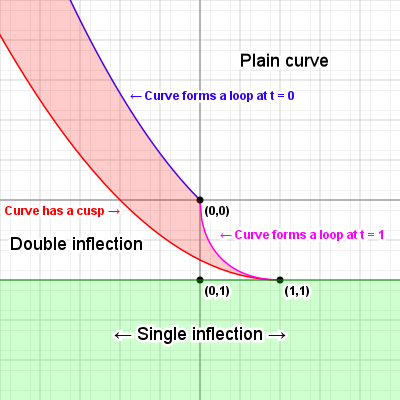

Curve inflections

Now that we know how to align a curve, there's one more thing we can calculate: inflection points. Imagine we have a variable size circle that we can slide up against our curve. We place it against the curve and adjust its radius so that where it touches the curve, the curvatures of the curve and the circle are the same, and then we start to slide the circle along the curve - for quadratic curves, we can always do this without the circle behaving oddly: we might have to change the radius of the circle as we slide it along, but it'll always sit against the same side of the curve.

But what happens with cubic curves? Imagine we have an S curve and we place our circle at the start of the curve, and start sliding it along. For a while we can simply adjust the radius and things will be fine, but once we get to the midpoint of that S, something odd happens: the circle "flips" from one side of the curve to the other side, in order for the curvatures to keep matching. This is called an inflection, and we can find out where those happen relatively easily.

What we need to do is solve a simple equation:

$$C(t) = 0$$

What we're saying here is that given the curvature function C(t), we want to know for which values of t this function is zero, meaning there is no "curvature", which will be exactly at the point between our circle being on one side of the curve, and our circle being on the other side of the curve. So what does C(t) look like? Actually something that seems not too hard:

$$C(t) = x'(t)\,y''(t) - y'(t)\,x''(t)$$

The function C(t) is the cross product between the first and second derivative functions for the parametric dimensions of our curve. And, as already shown, derivatives of Bézier curves are just simpler Bézier curves, with very easy to compute new coefficients, so this should be pretty easy.
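Here is a sketch of this unaligned approach in Python. The names are hypothetical, components are lists of Bézier weights evaluated with de Casteljau's algorithm, and C(t) follows directly from the derivative weights:

```python
from typing import List, Tuple

Point = Tuple[float, float]


def derive(w: List[float]) -> List[float]:
    # Derivative weights: n * (w[i+1] - w[i]).
    n = len(w) - 1
    return [n * (w[i + 1] - w[i]) for i in range(n)]


def evaluate(w: List[float], t: float) -> float:
    # de Casteljau's algorithm for a single Bézier component.
    pts = list(w)
    while len(pts) > 1:
        pts = [(1 - t) * a + t * b for a, b in zip(pts, pts[1:])]
    return pts[0]


def curvature_function(points: List[Point], t: float) -> float:
    """C(t) = x'(t) * y''(t) - y'(t) * x''(t) for a Bézier curve of degree >= 2."""
    dx = derive([p[0] for p in points])
    dy = derive([p[1] for p in points])
    ddx, ddy = derive(dx), derive(dy)
    return evaluate(dx, t) * evaluate(ddy, t) - evaluate(dy, t) * evaluate(ddx, t)
```

For the S-shaped curve (0, 0), (1, 1), (2, -1), (3, 0), C(t) is negative before t = 0.5, zero at t = 0.5, and positive after it: that is the inflection.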

However, as we've seen in the section on aligning, aligning lets us simplify things a lot, by completely removing the contributions of the first coordinate from most mathematical evaluations, and removing the last y coordinate as well by virtue of the last point lying on the x-axis. So, while we can evaluate C(t) = 0 for our curve, it'll be much easier to first axis-align the curve and then evaluate the curvature function.

Let's derive the full formula anyway

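Of course, before we do our aligned check, let's see what happens if we compute the curvature function without axis-aligning. We start with the first and second derivatives, given our basis functions:

$$x'(t) = 3(x_2 - x_1)(1-t)^2 + 6(x_3 - x_2)(1-t)t + 3(x_4 - x_3)t^2$$

$$x''(t) = 6(x_3 - 2x_2 + x_1)(1-t) + 6(x_4 - 2x_3 + x_2)t$$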

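And of course the same functions for y:

$$y'(t) = 3(y_2 - y_1)(1-t)^2 + 6(y_3 - y_2)(1-t)t + 3(y_4 - y_3)t^2$$

$$y''(t) = 6(y_3 - 2y_2 + y_1)(1-t) + 6(y_4 - 2y_3 + y_2)t$$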

Asking a computer to now compose the C(t) function for us (and to expand it to a readable form of simple terms) gives us this rather overly complicated set of arithmetic expressions:

That is... unwieldy. So, we note that there are a lot of terms involving x1, y1, and y4, which would all disappear if we axis-align our curve, which is why aligning is a great idea.

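Aligning our curve so that three of the eight coefficients become zero, and observing that scale does not affect finding t values, we end up with the following simple term function for C(t):

$$C(t) = (-3x_3y_2 + 2x_4y_2 + 3x_2y_3 - x_4y_3)\,t^2 + (3x_3y_2 - x_4y_2 - 3x_2y_3)\,t + (x_2y_3 - x_3y_2)$$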

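That's a lot easier to work with: we see a fair number of terms that we can compute and then cache, giving us the following simplification:

$$a = x_3y_2, \quad b = x_4y_2, \quad c = x_2y_3, \quad d = x_4y_3$$

$$C(t) = (-3a + 2b + 3c - d)\,t^2 + (3a - b - 3c)\,t + (c - a)$$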
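Here is a sketch of the aligned approach for cubic curves in Python. It relies on our own derivation of the aligned, scale-free form C(t) = (-3a + 2b + 3c - d)t² + (3a - b - 3c)t + (c - a), with a = x3·y2, b = x4·y2, c = x2·y3 and d = x4·y3. It solves that quadratic with a cancellation-free version of the quadratic formula and keeps only roots strictly between 0 and 1. `inflections` is a hypothetical name:

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def inflections(points: List[Point]) -> List[float]:
    """t values strictly between 0 and 1 where C(t) = 0 for a cubic Bézier
    curve, using the aligned, scale-free form of C(t)."""
    # Align: first point to (0, 0), last point onto the x-axis.
    tx, ty = points[0]
    angle = math.atan2(points[3][1] - ty, points[3][0] - tx)
    ca, sa = math.cos(-angle), math.sin(-angle)
    p = [((x - tx) * ca - (y - ty) * sa, (x - tx) * sa + (y - ty) * ca)
         for x, y in points]
    (x2, y2), (x3, y3), (x4, _) = p[1], p[2], p[3]
    # Cached products, then the quadratic coefficients of C(t).
    a, b, c, d = x3 * y2, x4 * y2, x2 * y3, x4 * y3
    qa = -3 * a + 2 * b + 3 * c - d
    qb = 3 * a - b - 3 * c
    qc = c - a
    disc = qb * qb - 4 * qa * qc
    if disc < 0:
        return []
    # Cancellation-free quadratic formula: roots q/qa and qc/q.
    q = -0.5 * (qb + math.copysign(math.sqrt(disc), qb))
    roots = ([q / qa] if qa != 0 else []) + ([qc / q] if q != 0 else [])
    return sorted(t for t in roots if 0 < t < 1)
```

For the symmetric S curve (0, 0), (1, 1), (2, -1), (3, 0), this gives `[0.5]`. Rotating a curve before calling it should not change the t values it finds.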